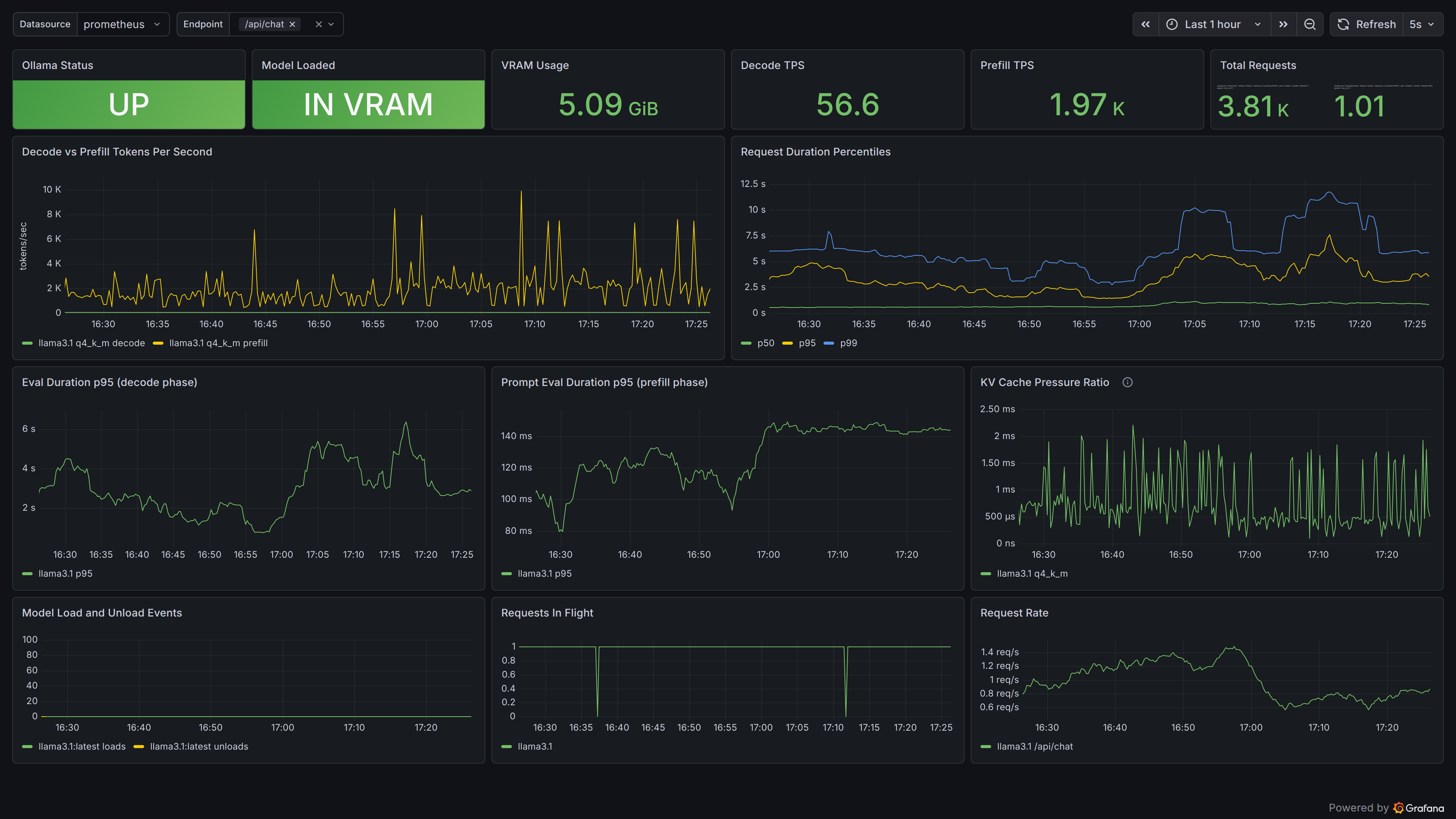

Ollama LLM Inference

Dashboard for ollama-exporter, an exporter for Ollama written in Go (https://github.com/maravexa/ollama-exporter).

Install ollama-exporter

With go install

go install github.com/maravexa/ollama-exporter/cmd/exporter@latest

ollama-exporter --ollama-url http://localhost:11434 --listen :9400

With Docker

docker run -d \
  --add-host=host.docker.internal:host-gateway \
  -e OLLAMA_URL=http://host.docker.internal:11434 \
  -p 9400:9400 -p 9401:9401 \
  ghcr.io/maravexa/ollama-exporter:latest
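
After either install method, a quick sanity check is to count the metric samples the exporter returns. The helper below is a minimal sketch, not part of ollama-exporter: the name `count_samples` is hypothetical, and it assumes the exporter emits Prometheus text-format output, which this page does not confirm.

```shell
# Hypothetical helper: count metric samples in Prometheus text-format
# output read from stdin.
# Assumption: ollama-exporter emits this format; this page does not say so.
count_samples() {
    # Sample lines start with a metric name ([a-zA-Z_:]...);
    # "# HELP" and "# TYPE" comment lines and blank lines are skipped.
    grep -c '^[a-zA-Z_:]'
}
```

If the exporter follows the common convention of serving metrics at `/metrics` (an assumption here), `curl -fsS http://localhost:9400/metrics | count_samples` should print a non-zero count once the exporter can reach Ollama.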
