This is documentation for the next version of Grafana Tempo. For the latest stable release, refer to the latest version of the documentation.

Improve performance with caching

Tempo uses an external cache to improve query performance. Tempo supports Memcached and Redis (experimental).

Cache roles

Tempo caches different types of data, each assigned a role.

You configure one or more cache instances under the top-level cache: block and assign roles to each instance.

This lets you size and tune each cache independently based on the workload it handles.

You can assign multiple roles to a single cache instance, or split high-volume roles (like parquet-page) onto a dedicated instance.

For example, you might use a large Memcached pool for parquet-page and a smaller one for bloom and parquet-footer.
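The split described above could look roughly like the following sketch. It assumes the `caches` list with per-instance `roles` and a `memcached` block, as described in the Cache section of the configuration reference. The hostnames and the timeout value are hypothetical placeholders, not recommendations.

```yaml
cache:
  caches:
    # Larger Memcached pool dedicated to the high-volume parquet-page role.
    - roles:
        - parquet-page
      memcached:
        host: memcached-pages.example.internal   # hypothetical hostname
        timeout: 500ms                           # illustrative value
    # Smaller Memcached pool shared by the lower-volume roles.
    - roles:
        - bloom
        - parquet-footer
      memcached:
        host: memcached-meta.example.internal    # hypothetical hostname
        timeout: 500ms                           # illustrative value
```

Each role appears under exactly one cache instance in this sketch, so each pool can be sized for the workload of its own roles.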

For configuration parameters and an example, refer to the Cache section of the Tempo configuration reference.

For information about search performance, refer to Tune search performance.

Monitor cache item sizes

Tempo emits the tempodb_cache_store_size_bytes histogram for every item written to the tempodb backend cache.

The metric is labeled by role, so you can compare item sizes for backend cache roles such as bloom, trace-id-index, parquet-footer, parquet-column-idx, parquet-offset-idx, and parquet-page.

Use this metric when you tune Memcached slab classes or decide whether to split high-volume cache roles onto a dedicated cache. For example, chart the P95 cache item size by role:

    histogram_quantile(
      0.95,
      sum by (role, le) (
        rate(tempodb_cache_store_size_bytes_bucket[5m])
      )
    )

If a role regularly writes larger items than the cache can store efficiently, move that role to a cache sized for larger objects or adjust the cache configuration before increasing query concurrency.
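If you chart this quantile often, one option is to precompute it with a Prometheus recording rule. The following is a minimal sketch that wraps the query above. The group name and the recorded series name are hypothetical and only follow the common `level:metric:operations` naming convention.

```yaml
groups:
  - name: tempo-cache-item-size          # hypothetical group name
    rules:
      # Precompute the P95 cache item size per role.
      - record: role:tempodb_cache_store_size_bytes:p95   # hypothetical series name
        expr: |
          histogram_quantile(
            0.95,
            sum by (role, le) (
              rate(tempodb_cache_store_size_bytes_bucket[5m])
            )
          )
```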

Memcached

Memcached is used by default in the Tanka and Helm examples. Refer to Deploy Tempo.

Connection limit

As a cluster grows in size, the number of instances of Tempo connecting to the cache servers also increases. By default, Memcached has a connection limit of 1024. Memcached refuses connections when this limit is surpassed. You can resolve this issue by increasing the connection limit of Memcached.
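As an illustration, Memcached accepts the `-c` option to set the maximum number of simultaneous connections. The following fragment of a Kubernetes container spec is a sketch only: the container name, image tag, and the value 4096 are assumptions, and the Tanka and Helm examples may expose this setting differently.

```yaml
# Fragment of a pod spec for a Memcached container (illustrative only).
containers:
  - name: memcached            # hypothetical container name
    image: memcached:1.6       # assumed image tag
    args:
      - "-c"                   # maximum simultaneous connections (default: 1024)
      - "4096"                 # illustrative value; size it for your number of Tempo instances
```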

You can use the tempo_memcache_request_duration_seconds_count metric to observe these errors, for example with the following query:

sum by (status_code) (

rate(tempo_memcache_request_duration_seconds_count{}[$__rate_interval])

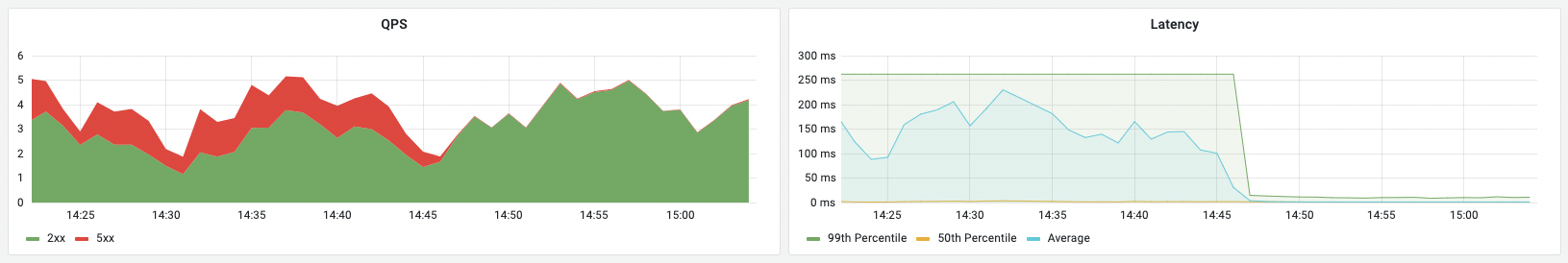

)This metric is also shown in the monitoring dashboards (the left panel):

Note that the already open connections continue to function. New connections are refused.

Additionally, Memcached logs the following errors when it can’t accept any new requests:

    accept4(): No file descriptors available
    Too many open connections

When using the memcached_exporter, you can observe the number of open connections at memcached_current_connections.
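To catch this condition before connections are refused, you could alert when the open connection count approaches the limit. The following Prometheus alerting rule is a sketch. It assumes that memcached_exporter also exposes the configured limit as `memcached_max_connections`. The group name, alert name, threshold, and duration are hypothetical.

```yaml
groups:
  - name: tempo-memcached-connections       # hypothetical group name
    rules:
      - alert: MemcachedConnectionsNearLimit   # hypothetical alert name
        # Fire when more than 80% of the allowed connections are in use.
        # Assumes memcached_max_connections is available from memcached_exporter.
        expr: memcached_current_connections / memcached_max_connections > 0.8
        for: 10m
        labels:
          severity: warning
        annotations:
          summary: Memcached is close to its connection limit and may start refusing new connections.
```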

Cache size control

The Tempo querier accesses the bloom filters of all blocks while searching for a trace. This essentially requires the cache to be at least as large as the total size of the bloom filters (the working set). However, in larger deployments, the working set might be larger than the desired cache size. When that happens, cache eviction rates grow high and hit rates drop.

Tempo provides two configuration parameters that restrict which bloom filters are stored in the cache.

    # Min compaction level of block to qualify for caching bloom filter
    # Example: "cache_min_compaction_level: 2"
    [cache_min_compaction_level: <int>]

    # Max block age to qualify for caching bloom filter
    # Example: "cache_max_block_age: 48h"
    [cache_max_block_age: <duration>]

Using a combination of these configuration options, you can narrow down which bloom filters are cached, which reduces the cache eviction rate and increases the cache hit rate.
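As a concrete sketch, the following fragment combines the two example values from the parameter descriptions. Placing the parameters under `storage.trace` is an assumption here, so confirm the location against the Tempo configuration reference for your version.

```yaml
storage:
  trace:                            # assumed location of these parameters
    # Cache bloom filters only for blocks at compaction level 2 or higher ...
    cache_min_compaction_level: 2
    # ... and only for blocks no older than 48 hours.
    cache_max_block_age: 48h
```

With both parameters set, a block's bloom filter qualifies for caching only when it satisfies both conditions, which keeps recently flushed, not yet compacted blocks and old blocks out of the cache.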

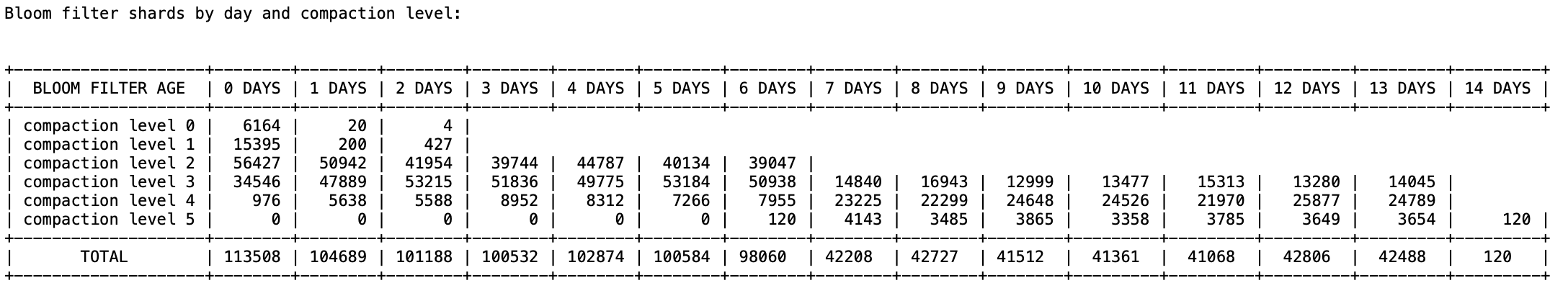

In order to decide the values of these configuration parameters, you can use a cache summary command in the tempo-cli that prints a summary of bloom filter shards per day and per compaction level. The result looks something like this:

This image shows the bloom filter shards over 14 days and 6 compaction levels. This can be used to decide the configuration parameters.