Loki deployment modes

Loki is a distributed system consisting of many microservices. It also has a unique build model where all of those microservices exist within the same binary.

You can configure the behavior of the single binary with the -target command-line flag to specify which microservices will run on startup. You can further configure each of the components in the loki.yaml file.
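As a minimal sketch, the target can also be set in the configuration file instead of on the command line. The fragment below assumes the top-level target key listed in the Loki configuration reference as the counterpart of the -target flag; check the reference for your version before relying on it.

```yaml
# loki.yaml (fragment)
# Assumed to have the same effect as passing -target=all on the command line.
# Other values, such as read, write, or backend, select a subset of components.
target: all
```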

Because Loki decouples the data it stores from the software which ingests and queries it, you can easily redeploy a cluster under a different mode as your needs change, with minimal or no configuration changes.

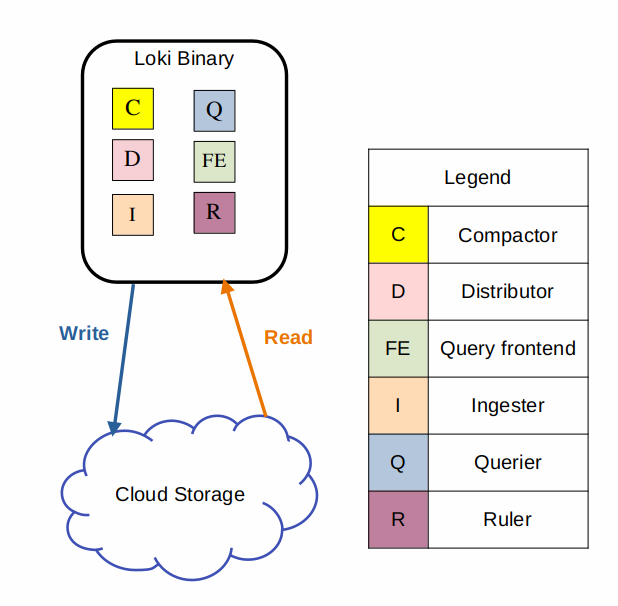

Monolithic mode

The simplest mode of operation is the monolithic deployment mode. You enable monolithic mode by setting the -target=all command line parameter. This mode runs all of Loki’s microservice components inside a single process as a single binary or Docker image.

Monolithic mode is useful for getting started quickly to experiment with Loki, as well as for small read/write volumes of up to approximately 20GB per day.

You can horizontally scale a monolithic mode deployment to more instances by using a shared object store, and by configuring the ring section of the loki.yaml file to share state between all instances, but the recommendation is to use microservices deployment mode if you need to scale your deployment.

You can configure high availability by running two Loki instances that use the memberlist_config configuration and a shared object store, and by setting replication_factor to 3. Route traffic to all the Loki instances in a round-robin fashion.
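The following loki.yaml fragment is one way such a setup might look, not a complete or authoritative configuration. The host names loki-1 and loki-2, the bucket name, and the region are hypothetical placeholders, and the schema_config block that selects the object store is omitted. In the configuration file, the memberlist_config settings live under the top-level memberlist key.

```yaml
# loki.yaml (fragment), shared by every monolithic instance
target: all

common:
  replication_factor: 3
  ring:
    kvstore:
      store: memberlist        # instances share ring state through memberlist
  storage:
    s3:                        # shared object store; values are placeholders
      bucketnames: loki-data
      region: us-east-1

memberlist:
  join_members:                # hypothetical host names of the Loki instances
    - loki-1:7946
    - loki-2:7946
```

Each instance runs with the same file, and a load balancer in front of them distributes requests in round-robin fashion.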

Query parallelization is limited by the number of instances and by the max_query_parallelism setting, which is defined in the loki.yaml file.
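For illustration, the setting sits in the limits_config block of loki.yaml. The value shown here is only an example and should be tuned to the number of instances you run.

```yaml
# loki.yaml (fragment)
limits_config:
  # Upper bound on how many sub-queries a single query may run in parallel.
  # The value 32 is illustrative, not a recommendation.
  max_query_parallelism: 32
```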

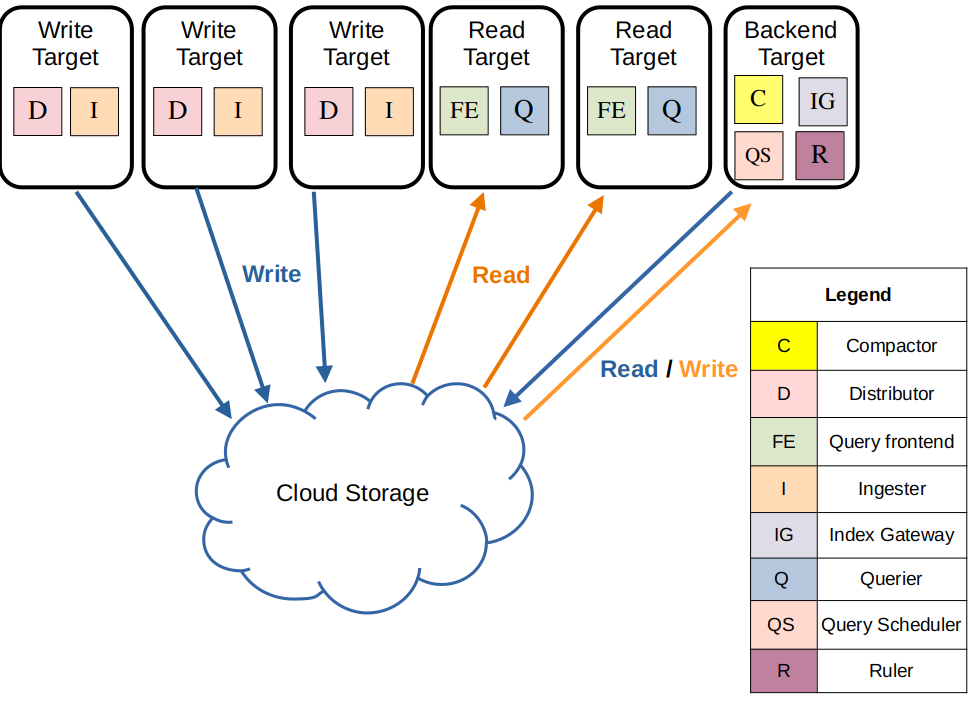

Simple Scalable

Note

Simple Scalable Deployment (SSD) mode is being deprecated. The timeline for the deprecation is to be determined (TBD), but will happen before Loki 4.0 is released.

The simple scalable deployment is the default configuration installed by the Loki Helm Chart. This deployment mode is the easiest way to deploy Loki at scale. It strikes a balance between deploying in monolithic mode or deploying each component as a separate microservice. Simple scalable deployment is also referred to as SSD.

Note

This deployment mode is sometimes referred to by the acronym SSD for simple scalable deployment, not to be confused with solid state drives. Loki uses an object store.

Loki’s simple scalable deployment mode separates execution paths into read, write, and backend targets. These targets can be scaled independently, letting you customize your Loki deployment to meet your business needs for log ingestion and log query so that your infrastructure costs better match how you use Loki.

The simple scalable deployment mode can scale close to a TB of logs per day. Scaling it further may be possible, but at that scale the microservices mode is a better choice in terms of scalability and ease of operations.

The three execution paths in simple scalable mode are each activated by appending the following arguments to Loki on startup:

- -target=write: The write target is stateful and is controlled by a Kubernetes StatefulSet. It contains the following components:

  - Distributor

  - Ingester

- -target=read: The read target is stateless and can be run as a Kubernetes Deployment that can be scaled automatically. (Note that in the official Helm chart it is currently deployed as a StatefulSet.) It contains the following components:

  - Query Frontend

  - Querier

- -target=backend: The backend target is stateful and is controlled by a Kubernetes StatefulSet. It contains the following components:

  - Compactor

  - Index Gateway

  - Query Scheduler

  - Ruler

  - Bloom Planner (experimental)

  - Bloom Builder (experimental)

  - Bloom Gateway (experimental)
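To make the three targets concrete outside Kubernetes, here is a rough Docker Compose sketch that starts one container per target. The service names, the image tag, and the local file name loki-config.yaml are assumptions made for this example. All three services are assumed to share one configuration file that points at the same object store and ring, and the reverse proxy is left out.

```yaml
# docker-compose.yaml (sketch): one service per simple scalable target
services:
  write:
    image: grafana/loki:3.2.1
    command: "-config.file=/etc/loki/config.yaml -target=write"
    volumes:
      - ./loki-config.yaml:/etc/loki/config.yaml   # hypothetical shared config

  read:
    image: grafana/loki:3.2.1
    command: "-config.file=/etc/loki/config.yaml -target=read"
    volumes:
      - ./loki-config.yaml:/etc/loki/config.yaml

  backend:
    image: grafana/loki:3.2.1
    command: "-config.file=/etc/loki/config.yaml -target=backend"
    volumes:
      - ./loki-config.yaml:/etc/loki/config.yaml
```

Because each path is its own service, you can add replicas of read or write independently of the other two.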

The simple scalable deployment mode requires a reverse proxy to be deployed in front of Loki, to direct client API requests to either the read or write nodes. The Loki Helm chart includes a default reverse proxy configuration, using Nginx.

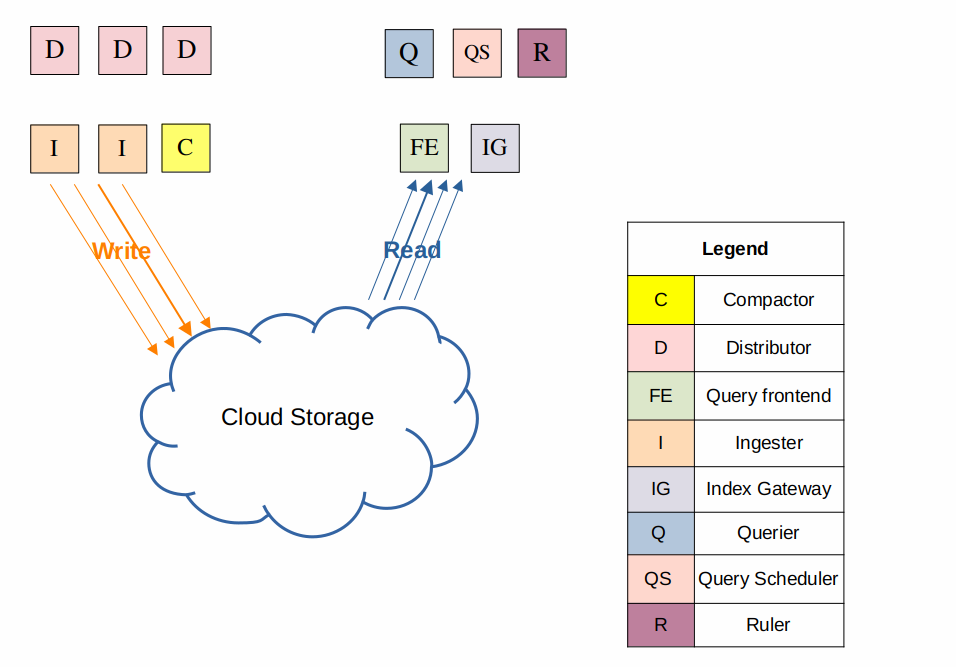

Microservices mode

The microservices deployment mode runs components of Loki as distinct processes. The microservices deployment is also referred to as a Distributed deployment. Each process is invoked specifying its target.

For release 3.3 the components are:

- Bloom Builder (experimental)

- Bloom Gateway (experimental)

- Bloom Planner (experimental)

- Compactor

- Distributor

- Index Gateway

- Ingester

- Overrides Exporter

- Querier

- Query Frontend

- Query Scheduler

- Ruler

- Table Manager (deprecated)

Tip

You can see the complete list of targets for your version of Loki by running Loki with the flag -list-targets, for example:

docker run docker.io/grafana/loki:3.2.1 -config.file=/etc/loki/local-config.yaml -list-targets

Running components as individual microservices provides more granularity, letting you scale each component independently to better match your specific use case.
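As an illustration of invoking a single component with its own target, the manifest below sketches a Kubernetes Deployment that runs only the distributor. It is not taken from any Helm chart. The resource names, labels, replica count, and the ConfigMap loki-config (assumed to hold a config.yaml key) are hypothetical, and port 3100 is assumed to be the HTTP listen port in that configuration.

```yaml
# distributor-deployment.yaml (sketch)
apiVersion: apps/v1
kind: Deployment
metadata:
  name: loki-distributor
spec:
  replicas: 3                      # scaled independently of other components
  selector:
    matchLabels:
      app: loki-distributor
  template:
    metadata:
      labels:
        app: loki-distributor
    spec:
      containers:
        - name: distributor
          image: grafana/loki:3.2.1
          args:
            - -config.file=/etc/loki/config.yaml
            - -target=distributor  # run only this component
          ports:
            - containerPort: 3100
          volumeMounts:
            - name: config
              mountPath: /etc/loki
      volumes:
        - name: config
          configMap:
            name: loki-config      # hypothetical ConfigMap with config.yaml
```

Other components would follow the same pattern with a different -target value and, for stateful components, a StatefulSet instead of a Deployment.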

Microservices mode can result in more efficient Loki installations. However, it is also the most complex mode to set up and maintain.

Microservices mode is only recommended for very large Loki clusters or for operators who need more precise control over scaling and cluster operations.

Microservices mode is designed for Kubernetes deployments. A community-supported Helm chart is available for deploying Loki in microservices mode.