Plugins 〉Azure Data Explorer Datasource

Azure Data Explorer Datasource

Azure Data Explorer data source for Grafana

Azure Data Explorer is a log analytics cloud platform optimized for ad-hoc big data queries.

Installation

This plugin has the following minimum requirements:

- v4.0.0+: Grafana 8.0.0

- v3.0.0+: Grafana 7.1.0

- < v3.0.0 require Grafana 6.3.6.

For detailed instructions on how to install the plugin on Grafana Cloud or locally, please check out the Plugin installation docs.

Enforcing trusted Azure Data Explorer endpoints

For additional security, enforcing a list of trusted ADX endpoints against which the cluster URL will be verified is possible. This prevents a request from being redirected to a third-party endpoint.

This can be enabled by setting enforce_trusted_endpoints in your Grafana configuration under the [plugin.grafana-azure-data-explorer-datasource] section:

[plugin.grafana-azure-data-explorer-datasource]

enforce_trusted_endpoints = true

Configure the Azure Data Explorer data source

To configure ADX for using this data source:

- Create an Azure Active Directory (AAD) Application and AAD Service Principal.

- In the Azure Data Explorer WebExplorer, connect the AAD Application to an Azure Data Explorer database user.

- Use the AAD Application to configure the data source connection in Grafana.

- (Optional) To use the dropdown cluster select when creating queries, add reader access to the subscription(s) that contain the clusters.

Creating an Azure Active Directory Service Principle

For detailed instructions on how to set up a Microsoft Entra application and service principal that can access resources, please follow this guide from Microsoft: Create a Microsoft Entra application and service principal that can access resources

An alternative way to create an AAD application is with the Azure CLI. For more information on the Azure CLI command, refer to az ad sp create-for-rbac:

az ad sp create-for-rbac -n "http://url.to.your.grafana:3000"

This should return the following:

{

"appId": "XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXXXX",

"displayName": "azure-cli-2018-09-20-13-42-58",

"name": "http://url.to.your.grafana:3000",

"password": "XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXXXX",

"tenant": "XXXXXXXX-XXXX-XXXX-XXXX-XXXXXXXXXXXX"

}

Assign the Reader role to the Service Principal and remove the Contributor role:

az role assignment create --assignee <your appId> --role Reader

az role assignment delete --assignee <your appId> --role Contributor

Connecting AAD with an Azure Data Explorer User

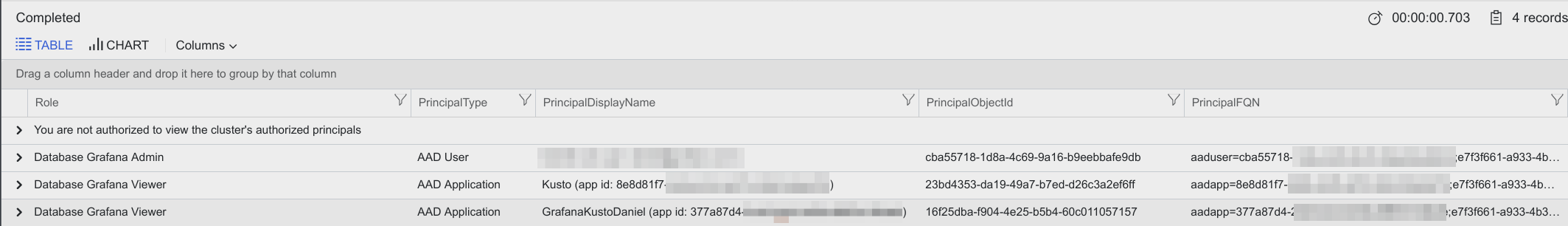

The AAD application that you created above needs to be given viewer access to your Azure Data Explorer database (in this example the database is called Grafana). This is done using the dot command add. The argument for .add contains both the client and tenant id separated by a semicolon:

.add database Grafana viewers ('aadapp=<your client id>;<your tenantid>')

A real example with a client/app id and tenant id:

.add database Grafana viewers ('aadapp=377a87d4-2cd3-44c0-b35a-8887a12fxxx;e7f3f661-a933-4b3f-8176-51c4f982exxx')

If the command succeeds, you should get a result like this:

Configuring Grafana

Add a data source by filling in the following fields:

| Field | Description |

|---|---|

| Directory (tenant) ID | (Azure Active Directory -> Properties -> Directory ID) |

| Application (client) ID | (Azure Active Directory -> App Registrations -> Choose your app -> Application ID) |

| Client Secret | ( Azure Active Directory -> App Registrations -> Choose your app -> Keys) |

| Default Cluster | (Options) If no cluster is selected when making a query, the default cluster will be used. |

Additional settings

Additional settings are optional settings that can be configured for more control over your data source. Additional settings can be accessed by expanding the additional settings section at the bottom of the data source configuration page.

| Field | Description |

|---|---|

| Query timeout | This value controls the client query timeout. |

| Use dynamic caching | By enabling this feature Grafana will dynamically apply cache settings on a per-query basis, and the default cache max age will be ignored. The bin size for time series queries will be used to widen the time range and as cache max age. |

| Cache max age | By default, the cache is disabled. If you want to enable the query caching please specify a max timespan for the cache to live. |

| Data consistency | Query consistency controls how queries and updates are synchronized. Defaults to Strong. For more information, refer to Query consistency |

| Default editor mode | This setting dictates which mode the editor will open in. Defaults to Visual. |

| Default database | The default database will be used if no database is selected. A default cluster is required to select a default database. To load default database options, you must save the data source with a valid Azure connection. |

| Use managed schema | If enabled, tables, functions, and materialized views are mapped to user-friendly names. |

| Send username header to host | With this feature enabled, Grafana will pass the logged in user's username in the x-ms-user-id header and in the x-ms-client-request-id header when sending requests to ADX. It can be useful when tracking needs to be done in ADX. |

Configuring On-Behalf-Of authentication (Beta)

{{% admonition type="caution" /%}} This feature is in Beta and subject to breaking changes {{%/ /admonition %}}

For information about setting up and using the OBO flow, refer to on-behalf-of documentation

Query the data source

Before querying the data source, select the query header options cluster, database, and format. You can create queries using the query builder, KQL, or OpenAI.

Query header

Cluster

Select a cluster to query. If a default cluster was set in the data source settings, then it will auto-populate the cluster select. If there are no clusters to choose from, refer to Configure the Azure Data Explorer data source

Database

Select a database to query. If a default database was set in the data source settings, it will auto-populate the database selection.

Format as

Queries can be formatted as Table, Time Series, Trace, or ADX time series data using the Format as dropdown select.

Table queries are mainly used in the Table panel as a list of columns and rows. This example query returns rows with the six specified columns:

AzureActivity | where $__timeFilter() | project TimeGenerated, ResourceGroup, Category, OperationName, ActivityStatus, Caller | order by TimeGenerated descTime series queries are for the Graph Panel (and other panels like the Single Stat panel). The query must contain exactly one datetime column, one or more number valued columns, and optionally one more more string columns as labels. Here is an example query that returns the aggregated count grouped by the Category column and grouped by hour:

AzureActivity | where $__timeFilter(TimeGenerated) | summarize count() by Category, bin(TimeGenerated, 1h) | order by TimeGenerated ascThe number of valued columns is considered metrics, and the optional string columns are treated as tags. A time series is returned for each value column + a unique set of string column values. Each series has name of valueColumnName {stringColumnName=columnValue, ... }.

For example, the following query will produce series like

AvgDirectDeaths {EventType=Excessive Heat, State=DELAWARE}``EventCount {EventType=Excessive Heat, State=NEW JERSEY}:StormEvents | where $__timeFilter(StartTime) | summarize EventCount=count(), AvgDirectDeaths=avg(DeathsDirect) by EventType, State, bin(StartTime, $__timeInterval) | order by StartTime ascTrace format option can be used to display appropriately formatted data using the built-in trace visualization. To use this visualization, data must be presented following the schema that is defined here. The schema contains the

logs,serviceTags, andtagsfields which are expected to be JSON objects. These fields will be converted to the expected data structure provided the schema in ADX matches the below:logs- an array of JSON objects with atimestampfield that has a numeric value, and afieldsfield that is key-value object.serviceTagsandtags- a typical key-value JSON object without nested objects.

The values for keys are expected to be primitive types rather than complex types. The correct value to pass when empty is either

null, an empty JSON object forserviceTagsandtags, or an empty array forlogs.ADX time series are for queries that use the Kusto

make-seriesoperator. The query must have exactly one datetime column namedTimestampand at least one value column. There may also optionally be string columns that will be labels.Example:

let T = range Timestamp from $__timeFrom to ($__timeTo + -30m) step 1m | extend Person = dynamic(["Torkel", "Daniel", "Kyle", "Sofia"]) | extend Place = dynamic(["EU", "EU", "US", "EU"]) | mvexpand Person, Place | extend HatInventory = rand(5) | project Timestamp, tostring(Person), tostring(Place), HatInventory;T | make-series AvgHatInventory=avg(HatInventory) default=double(null) on Timestamp from $__timeFrom to $__timeTo step 1m by Person, Place | extend series_decompose_forecast(AvgHatInventory, 30) | project-away *residual, *baseline, *seasonal

Query Builder

| Field | Description |

|---|---|

| Table | Select a table. |

| Columns | Select a subset of columns for faster results. Time series requires both time and number values; other columns are rendered as dimensions. For more information about dimensions, refer to Time series dimensions. |

| Filters | (Optional) Add filters for the selected columns. Values for filters will be restricted to the column's data type. |

| Aggregate | (Optional) Add aggregations for the selected columns. Select an aggregation type from the dropdown and select a column to aggregate on. |

| Group by | (Optional) Add group bys for the selected columns. For time group bys select a time range bucket. |

| Timeshift (deprecated use grafana time shift in Query Options) | (Optional) Shift the time ranges generated from Grafana macros by a predetermined duration. |

Columns of the dynamic type are supported within the query builder. This encompasses arrays, JSON objects, and nested objects within arrays. A limitation is only the first 50,000 rows are queried for data, so only properties contained within the first 50,000 rows will be listed as options in the builder selectors. Additional values can be manually written in the different selectors if they don't appear by default. Also, due to the fact that these queries make use of mv-expand, they may become resource intensive.

Refer to the documentation below for further details on handling dynamic columns appropriately via the KQL editor.

Kusto Data Types - Documentation on data types supported by Kusto.

Dynamic Data Type - Detailed documentation on the dynamic data type.

Query with Kusto Query Language (KQL)

Queries are written in Kusto Query Language; for more information, refer to Kusto Query Language (KQL) overview.

OpenAI query generator

{{% admonition type="note" /%}} You must enable the LLM plugin to use this feature. {{%/ /admonition %}}

The LLM plugin can be installed at LLM app. After installing the plugin, enable it.

To use the query generator, type in a statement or question about the data you want to see and click Generate query. Review and edit the generated KQL query in the Generated query field. Once satisfied with the query, run the query by clicking Run query.

Time Macros

To make writing queries easier, there are some Grafana macros that can be used in the where clause of a query:

$__timeFilter()- Expands toTimeGenerated ≥ datetime(2018-06-05T18:09:58.907Z) and TimeGenerated ≤ datetime(2018-06-05T20:09:58.907Z)where the from and to datetimes are taken from the Grafana time picker.$__timeFilter(datetimeColumn)- Expands todatetimeColumn ≥ datetime(2018-06-05T18:09:58.907Z) and datetimeColumn ≤ datetime(2018-06-05T20:09:58.907Z)where the from and to datetimes are taken from the Grafana time picker.$__timeFrom- Expands todatetime(2018-06-05T18:09:58.907Z), the start time of the query.$__timeTo- expands todatetime(2018-06-05T20:09:58.907Z), the end time of the query.$__timeInterval- expands to5000ms, Grafana's recommended bin size based on the timespan of the query, in milliseconds. In alerting this will always be1000ms, it is recommended not to use this macro in alert queries.

Templating Macros

$__escapeMulti($myVar)- is to be used with multi-value template variables that contains illegal characters. If $myVar has the value'\\grafana-vm\Network(eth0)\Total','\\hello!', it expands to:@'\\grafana-vm\Network(eth0)\Total', @'\\hello!'. If using single value variables there no need for this macro, simply escape the variable inline instead -@'\$myVar'$__contains(colName, $myVar)- is to be used with multi-value template variables. If $myVar has the value'value1','value2', it expands to:colName in ('value1','value2').If using the

Alloption, then check theInclude All Optioncheckbox and in theCustom all valuefield type in the following value:all. If $myVar has valueallthen the macro will instead expand to1 == 1. For template variables with a lot of options, this will increase the query performance by not building a large where..in clause.

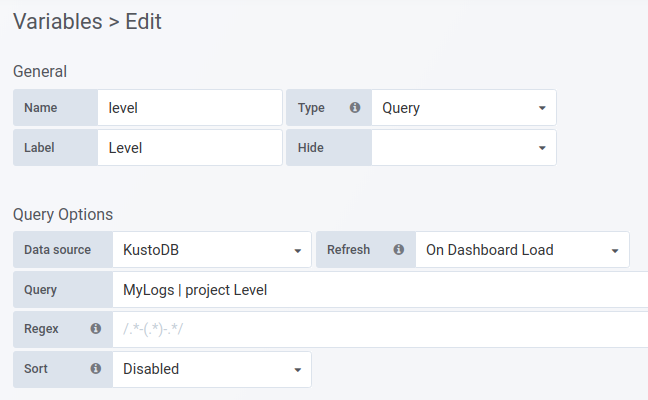

Templating with Variables

Instead of hard-coding things like server, application, and sensor name in your metric queries, you can use variables in their place. Variables are shown as dropdown select boxes at the top of the dashboard. These dropdowns make it easy to change the data being displayed in your dashboard.

Create the variable in the dashboard settings. Usually, you will need to write a query in KQL to get a list of values for the dropdown. However, having a list of hard-coded values is also possible.

Fill in a name for your variable. The

Namefield is the name of the variable. There is also aLabelfield for the friendly name.In the Query Options section, choose the

Azure Data Explorerdatasource in theData sourcedropdown.Write the query in the

Queryfield. Useprojectto specify one column - the result should be a list of string values.

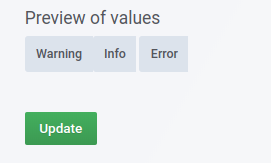

At the bottom, you will see a preview of the values returned from the query:

Use the variable in your query (in this case the variable is named

level):MyLogs | where Level == '$level'For variables where multiple values are allowed then use the

inoperator instead:MyLogs | where Level in ($level)

Read more about templating and variables in the Grafana documentation.

Databases Variable

There is no way to fetch a list of databases with the Kusto query language. When creating a template variable as described in the Templating with variables section, use the following function in the Query field to return a list of databases:

databases()

This variable can be used in the databases dropdown. This gives you the ability to switch databases without editing the query in the panel.

To use the variable, type the name of your variable into the dropdown. For example, if the name of your variable is database, then type $database.

Annotations

An annotation is an event that is overlaid on top of graphs. The query can have up to three columns per row, the datetime column is mandatory. Annotation rendering is expensive so it is important to limit the number of rows returned.

- column with the datetime type.

- column with alias: Text or text for the annotation text

- column with alias: Tags or tags for annotation tags. This should return a comma separated string of tags e.g. 'tag1,tag2'

Example query:

MyLogs

| where $__timeFilter(Timestamp)

| project Timestamp, Text=Message , Tags="tag1,tag2"

Learn more

- Add Annotations.

- Configure and use Templates and variables.

- Add Transformations.

- Set up alerting; refer to Alerts overview.

Grafana Cloud Free

- Free tier: Limited to 3 users

- Paid plans: $55 / user / month above included usage

- Access to all Enterprise Plugins

- Fully managed service (not available to self-manage)

Self-hosted Grafana Enterprise

- Access to all Enterprise plugins

- All Grafana Enterprise features

- Self-manage on your own infrastructure

Grafana Cloud Free

- Free tier: Limited to 3 users

- Paid plans: $55 / user / month above included usage

- Access to all Enterprise Plugins

- Fully managed service (not available to self-manage)

Self-hosted Grafana Enterprise

- Access to all Enterprise plugins

- All Grafana Enterprise features

- Self-manage on your own infrastructure

Grafana Cloud Free

.h4 . .mb-0 }

- Free tier: Limited to 3 users

- Paid plans: $55 / user / month above included usage

- Access to all Enterprise Plugins

- Fully managed service (not available to self-manage)

Self-hosted Grafana Enterprise

- Access to all Enterprise plugins

- All Grafana Enterprise features

- Self-manage on your own infrastructure

Grafana Cloud Free

- Free tier: Limited to 3 users

- Paid plans: $55 / user / month above included usage

- Access to all Enterprise Plugins

- Fully managed service (not available to self-manage)

Self-hosted Grafana Enterprise

- Access to all Enterprise plugins

- All Grafana Enterprise features

- Self-manage on your own infrastructure

Grafana Cloud Free

- Free tier: Limited to 3 users

- Paid plans: $55 / user / month above included usage

- Access to all Enterprise Plugins

- Fully managed service (not available to self-manage)

Self-hosted Grafana Enterprise

- Access to all Enterprise plugins

- All Grafana Enterprise features

- Self-manage on your own infrastructure

Installing Azure Data Explorer Datasource on Grafana Cloud:

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

Installing plugins on a Grafana Cloud instance is a one-click install; same with updates. Cool, right?

Note that it could take up to 1 minute to see the plugin show up in your Grafana.

For more information, visit the docs on plugin installation.

Installing on a local Grafana:

For local instances, plugins are installed and updated via a simple CLI command. Plugins are not updated automatically, however you will be notified when updates are available right within your Grafana.

1. Install the Data Source

Use the grafana-cli tool to install Azure Data Explorer Datasource from the commandline:

grafana-cli plugins install The plugin will be installed into your grafana plugins directory; the default is /var/lib/grafana/plugins. More information on the cli tool.

Alternatively, you can manually download the .zip file for your architecture below and unpack it into your grafana plugins directory.

Alternatively, you can manually download the .zip file and unpack it into your grafana plugins directory.

2. Configure the Data Source

Accessed from the Grafana main menu, newly installed data sources can be added immediately within the Data Sources section.

Next, click the Add data source button in the upper right. The data source will be available for selection in the Type select box.

To see a list of installed data sources, click the Plugins item in the main menu. Both core data sources and installed data sources will appear.

CHANGELOG

[5.0.7]

[5.0.6]

- Add

errorsource#996 - Dependency updates

[5.0.5]

[5.0.4]

- Fix: Custom clouds are now loaded from context #921

[5.0.3]

- Upgrade dependencies

[5.0.2]

- Upgrade dependencies

- Fix: Re-add some legacy endpoints for older Grafana version compatibility.

- Fix: Ensure multi-select template variables work correctly with the query builder.

[5.0.1]

- Upgrade dependencies

- Fix: Set query database to default if unspecified #863

[5.0.0]

- Breaking change: The legacy query editor has been removed. The

adxLegacyEditorfeature toggle will no longer work. - Remove references to deprecated Grafana APIs.

- Dependency updates.

- Improve error messages.

- Fix: Bypass trusted endpoint enforcement for cluster request.

[4.10.0]

- Feature: Support Private Datasource Connect (secure socks proxy).

- Feature: Add explain functionality for KQL queries.

- Feature: Add support for logs visualization.

[4.9.0]

- New feature: Add support for query cluster selection.

- Feature: Add compatibility for loading Monaco Editor via ESM.

[4.8.0]

- New feature: OpenAI: incorporate the LLM plugin

- Bump github.com/grafana/grafana-plugin-sdk-go from 0.180.0 to 0.195.0

[4.7.1]

- Upgrade dependencies

- Update variable editor

[4.7.0]

- New feature: Add support for Workload Identity authentication.

[4.6.3]

- New feature: Add support for enforcing only known Azure Data Explorer endpoints in cluster URL.

[4.6.2]

- Fix: Infinite re-render in QueryEditor component.

[4.6.1]

- Fix: Allow dynamic values to be empty for traces.

[4.6.0]

- New feature: Add support for trace data and the Trace visualization.

- Dependency updates.

- Fix: Fixed a bug that prevented switching back to the ADX datasource.

- Fix: When using the generate query feature the token is now validated before attempting to query.

- Fix: Booleans can be represented as numbers or bool as ADX allows.

[4.5.0]

- Add OpenAI integration which allows users to generate KQL queries via natural language.

- Experimental - Add support for current user authorization.

[4.4.1]

Build with latest Go version 1.20.4

[4.4.0]

Update backend dependencies

[4.3.0]

This release revamps the editor for Azure Data Explorer template variables:

- New feature: Predefined queries have been added for databases, tables, and columns to simplify template variable usage.

- New feature: Both the query builder and KQL editor can be used to query for template variables.

- New feature: Macros and template variables are now supported within Azure Data Explorer template variable queries.

This release also includes:

- Fix: The ADX time series format is now preserved when using the KQL editor.

- Fix: Config editor label widths are now consistent.

- Fix: Field focussing now behaves as expected.

- Refactor: OBO token provider makes use of configurable middleware.

- Refactor: Deprecated

metricFindQueryfunction from Grafana and otherrxjsfunctions have been removed.

[4.2.0]

This release revamps the plugin query builder:

- New feature: It's now possible to filter columns within a query, improving the performance of queries.

- The query preview includes syntax highlighting for Kusto.

- All other components have been refactored to match the latest Grafana UI.

Apart from that, this release also includes:

- Refactor: Authentication and configuration has been refactored to match other Azure plugins.

- Fix: Health check for data sources configured with On-Behalf-Of authentication.

- Fix: Alert queries that returns no data.

[4.1.10]

- Fix: Invalid code editor loaded for Grafana versions that don't follow semantic versioning by @aangelisc in https://github.com/grafana/azure-data-explorer-datasource/pull/506

- Fix error unmounting ADX query editor by @aangelisc in https://github.com/grafana/azure-data-explorer-datasource/pull/519

- Security: Upgrade Go in build process to 1.19.3

[4.1.9]

- Security: Upgrade Go in build process to 1.19.2

- Fix: Schema mapping displaying macro functions

[4.1.8]

- Report interaction on dashboard load for feature tracking

[4.1.7]

- Fix crash when creating Alerts

- Autocomplete now works with dynamic values

- Fix template variables for values containing parentheses

[4.1.6]

- Change default logic for dynamic columns: Cast to double if type exists in schema

[4.1.5]

- Fix: Update table in the KQL expression when changing the database.

[4.1.4]

- Change the default format to table data to avoid accidental high consumption of memory.

- Fix: Quote columns with spaces in the query builder.

[4.1.3]

- Fix: Correctly cast dynamically typed columns in the query builder.

[4.1.2]

This release include several bug fixes:

- Fix reload schema button in configuration.

- Fix dynamic resolution for simple types in the query builder.

- Fix "Aggregate" and "Group by" removal logic also for the builder.

- Return configured default database instead of the first one.

[4.1.1]

Several bug fixes for the visual query builder:

- Add materialized views as tables.

- Fix template variable quoting.

- Fix syntax dynamic fields with multiple types.

[4.1.0]

- New Feature: The visual query editor now supports

dynamiccolumns. This includes columns with one or more arrays ofdynamicvalues.

[4.0.2]

- Breaking Change on Beta feature: On-Behalf-Of flow is now disabled by default

[4.0.1]

- Bugfix: Remove custom token cache used for On-Behalf-Of flow (Beta) and rely on Microsoft Authentication Library to keep a local cache.

[4.0.0]

- Breaking Change: Azure Data Explorer plugin now requires Grafana 8.0+ to run.

- Breaking Change: obo_latency_seconds metric was removed.

- Bugfix: Included new Kusto query editor. NOTE: This new editor will be only available if used with Grafana 8.5 or later. Fixes #325.

- Bugfix: Filter dynamic columns from Where/Aggregate/Group by clauses to prevent syntax errors.

- Bugfix: Add logical operators for timespan types in the query builder.

- Internal: Client secret authentication via Grafana Azure SDK.

- Internal: OBO authentication via MSAL for Go.

[3.7.1]

- Bugfix: Fix scope for national clouds

[3.7.0]

- Chore: Added test coverage script

[3.7.0-beta1]

- Feature: Add On-Behalf-Of Token Authorization

- Bugfix: Eliminate Client ID Panic

- Bugfix: Append azure error to query unsuccessful message

- Bugfix: Fix macro regex on columns with hyphens

- Internal: Update plugin dependencies

[3.6.1]

- Reverted change made in 3.6.0 and reuse the previous code editor until we fix the related issues.

[3.6.0]

- Replaced custom query editor with @grafana/ui common editor, with support for Kusto.

[3.5.1]

- Bugfix: Fixed issue where HTTP timeout setting was not being applied

- Bugfix: Fixed issue when typing vs copy/pasting client secret in configuration

- Bugfix: Fixed issue where annotation queries were not being displayed

[3.5.0]

- Add support for national clouds

- Replace plugin proxy routes with call resource handler

- Add instance manager, shared http client and use new token provider

[3.4.1]

- Bugfix: Fix an error loading schemas on the configuration page.

[3.4.0]

Note: The minimum required version of Grafana is now 7.4

- Bugfix: Fixed issue where query builder did not handle table names that contained special characters

- Bugfix: Fixed empty WHERE lines staying in the query builder when cleared

- Template variables can now be used in the queries of other template variables.

[3.3.2]

- Bugfix: Fixed an issue where the KQL Monaco editor wouldn't load when Grafana is served from a sub path

- Bugfix: Fixed template query variables not working

[3.3.1]

- Bugfix: nil check plugincontext user before setting tracking header

[3.3.0]

- Add tracking capabilities by making it possible to pass the logged in Grafana user's username as a header to ADX

- Use jsoniter instead of encoding/json in order to improve performance

- Bugfix: Expand query template variables before building query

- Bugfix: Fix minor typo in confirmation dialog

All notable changes to this project will be documented in this file.

[3.2.1]

- Locked grafana-packages version and upgrade toolkit.

[3.2.0]

- Added support for decimal data type.

- Removed global query limit to prevent data being truncated.

- Improved the visual query builder to make it easier to add aggregations to a query.

- Added support for handling schema mappings to filter out parts of the database schema being available in the visual query builder.

- Bugfix: prevent empty queries from triggering when creating dashboard.

- Bugfix: fixing so we properly select ADX time series option when editor is in raw mode.

- Bugfix: added a timeout setting and will make sure the plugin is handling timeout of long running queries properly.

[3.1.0]

- Global query limit is now configurable in datasource settings.

- Auto complete will include other filters when doing the search for possible values.

- Added !has and has_any operators.

- Adding datasource setting to set default view when creating new queries.

- Dynamic caching added to enable caching setting on a per query basis.

- Column names will not sort exact match on top.

- Columns with auto complete support will now pre-populate options prior to typing.

- Added support to perform time shift queries.

- Added dcount() operator for aggregations.

- Bugfix: operator descriptions are now wider and readable.

- Bugfix: display template variables as options.

- Bugfix: excluding empty/missing operators from query.

- Bugfix: aggregations without group-by are not working.

- Bugfix: time interval off by 1000ms.

[3.0.5]

- Bugfix: when selecting template variables in the visual editor for table or database the values wasn't properly set. This should now be fixed.

[3.0.4]

- Bugfix: visual editor now includes template variables in the database selector.

[3.0.3]

- Bugfix: displays proper error message when credentials for datasource is invalid.

- Bugfix: visual editor now supports time fields in dynamic columns.

[3.0.2]

- Bugfix: Fixed issue with schema not updating when changing datasource.

- Improved performance when loading table schema.

- Improved performance when doing auto complete searching.

[3.0.1]

- Support for value autocomplete in the visual query editor.

- Support for dynamic columns in the visual query editor. Dynamic fields are automatically read from the table schema and are selectable when building up a query. Value autocomplete also works for dynamic columns.

- Migration script for existing dashboards.

- Performance improvements for the autocomplete and dynamic column features.

- Performance improvements when loading the table schema.

[3.0.0]

- Adds support for a new visual query editor.

- Ports the existing query editor to React.

[2.1.0]

- Adds support for the databases() macro for template variable queries and the database variable can then be used in the databases dropdown in the query editor. This allows the user to switch databases for a query without editing it.

[2.0.6]

- Signed Plugin for v7

[2.0.5]

- Bugfix for issue #61. This is a temp fix, as a proper fix requires refactoring some of the backend.

[2.0.4]

- Bugfix for issue #73

[2.0.3]

- Bugfix for monaco loader

[2.0.2]

- Bugfix for issue #60

- Updated packages

[2.0.1]

- Add key value support to plugin (based off of mysql plugin)

- New feature for metric naming and aliasing

[2.0.0]

- Time series queries now support alerting.

- Time series queries now support multiple value and multiple string columns.

- The Kusto "time series" type created with the Kusto

make-seriesoperator is now supported. - Macros have been added so as not to conflict with Grafana's built-in query Macros:

$__timeFrom,$__timeTo, and$__timeInterval. - Caching of Table and Time Series queries has been removed until backend plugins support caching.

- Queries no longer have an ORDER by clause appended when there is not one, however if time series is unsorted there will be a warning in the query editor.

[1.3.2] - 2019-06-19

- Bugfix for issue #8

- Updated packages

- Added circleci

v1.3.0

- Adds an order by clause to the query if there is none specified. It uses the datetime field from the where clause or summarize...bin().

- Removes the Subscription Id field from the config page as is no longer needed.

v1.2.0

- Adds a config option for caching. The default in-memory cache period is 30 seconds, the new

Minimal Cache Periodoption allows you to change that.

v1.1.0

- Adds $__escapeMulti macro

v1.0.0

- First version of the Azure Data Explorer Datasource.