Store, query, and alert on data

Grafana Cloud gives you a centralized, high-performance, long-term data store for your metrics and logging data. Endpoints for Prometheus, Graphite, Tempo, and Loki let you ship data from multiple sources to Grafana Cloud, where you can build dashboards that aggregate, query, and alert on data across all of these sources. Grafana Cloud Metrics and Logs offers fast query performance, tuned and optimized by Mimir and Loki maintainers, along with horizontally scalable alerting and rule evaluation through Grafana Alerting.

Get started with Grafana Cloud Metrics and Logs, if you have existing Prometheus, Graphite and/or Loki instances

If you are moving to Grafana Cloud and already have data sources set up, the following sections describe how to ship their data.

This page assumes your data source is already set up and running.

Ship your Prometheus series to Grafana Cloud

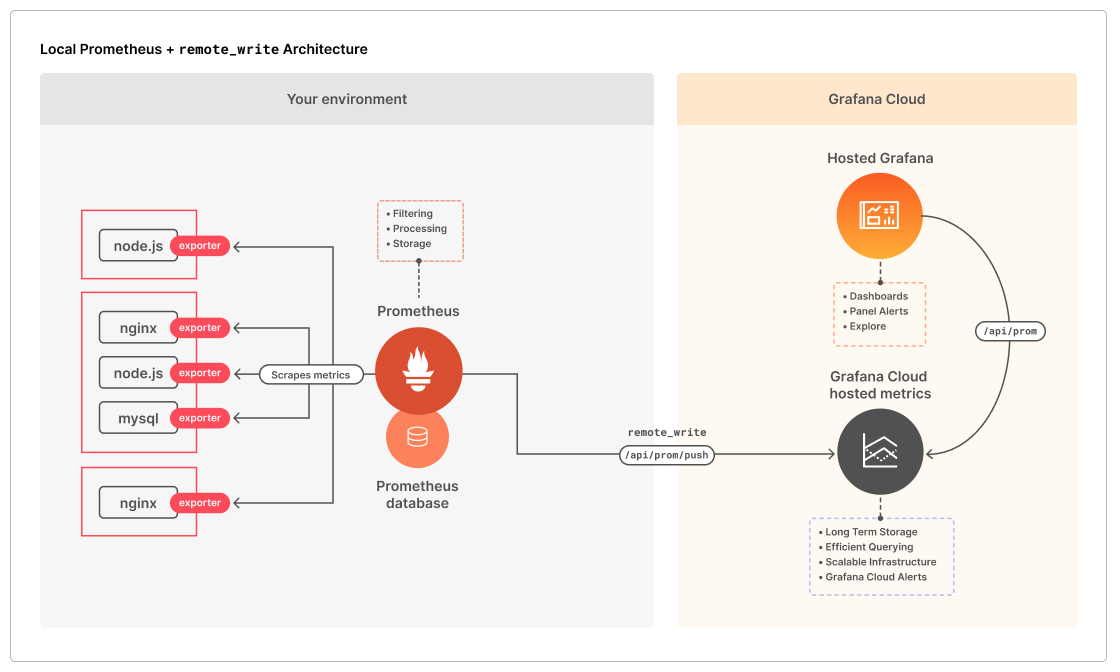

Prometheus collects metrics by pulling (scraping) them from a set of defined targets. It can also push the samples it has scraped to a remote system in bulk, using remote_write. Grafana Cloud relies on this mechanism: your local Prometheus pushes the metrics it scrapes to Grafana Cloud, rather than Grafana Cloud polling your targets directly. Grafana Alloy simplifies collecting and forwarding telemetry to Grafana Cloud.

Using Prometheus’s remote_write feature, you can ship copies of scraped samples to your Grafana Cloud Prometheus metrics service. To learn how to enable remote_write, see Prometheus metrics from the Grafana Cloud docs. If you’re using Helm to manage Prometheus, configure remote_write using the Helm chart’s values file. Please see Values files from the Helm docs for more information on configuring Helm charts.
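As a rough sketch of what enabling remote_write can look like, the fragment below adds a remote_write block to prometheus.yml. The host, username, and password are placeholders introduced here for illustration, not real values; copy the exact URL and credentials shown for your own Grafana Cloud stack.

```yaml
# prometheus.yml (fragment): forward copies of scraped samples to Grafana Cloud.
# Every value in angle brackets is a placeholder (an assumption for this sketch).
remote_write:
  - url: "https://<your-prometheus-host>/api/prom/push"
    basic_auth:
      username: "<your-metrics-instance-id>"
      password: "<your-grafana-cloud-access-token>"
```

With a Helm-managed Prometheus, the same list of remote_write entries usually goes under a chart-specific key in the values file. The key name depends on the chart, so check that chart's documented values before using it.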

The Monitoring a Linux host using Prometheus and node_exporter quickstart provides a complete start-to-finish example: it installs a local Prometheus instance and then uses remote_write to send metrics from there to your Grafana Cloud Prometheus instance.

Ship your Graphite metrics to Grafana Cloud

carbon-relay-ng allows you to aggregate, filter and route your Graphite metrics to Grafana Cloud. To learn how to configure a carbon-relay-ng instance in your local environment to ship Graphite data to Grafana Cloud, please see How to Stream Graphite Metrics to Grafana Cloud using carbon-relay-ng.

Ship your Loki logs to Grafana Cloud

Grafana Alloy is the recommended agent for shipping logs to either a Loki instance or Grafana Cloud. To learn more, refer to Logs and Collect logs with Grafana Alloy.

Trace program execution information with Tempo

The Tempo tracing service tracks the lifecycle of a request as it passes through applications. For more information on Tempo, see Tempo documentation.

Get started with Grafana Cloud Metrics and Logs, if you’re starting from scratch

If you are new to Grafana Cloud and would like to use Prometheus, the Monitoring a Linux host using Prometheus and node_exporter quickstart provides a complete remote_write example.

Install and configure Prometheus

Prometheus scrapes, stores, ships, and alerts on metrics collected from one or more monitoring targets. Using its remote_write feature, you can then ship these collected samples to a remote endpoint like Grafana Cloud for long-term storage and aggregation. To learn how to install Prometheus, please see Installation from the Prometheus documentation. Prometheus relies on exporters to expose Prometheus-style metrics for systems in your environment. For example, Node exporter exports hardware and OS metrics for *NIX systems. To get started with exporters, please see Exporters and Integrations.
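To make the exporter model concrete, here is a minimal sketch of a scrape configuration, assuming a Node exporter is already running on the same host as Prometheus and listening on its default port. The job name and scrape interval are illustrative choices, not values from this page.

```yaml
# prometheus.yml (fragment): scrape a Node exporter on the local host.
global:
  scrape_interval: 60s              # illustrative value; tune to your needs

scrape_configs:
  - job_name: node                  # hypothetical job name
    static_configs:
      - targets: ["localhost:9100"] # Node exporter's default listen port
```

Samples collected by this job are stored locally and, if a remote_write block is also configured, copied to the remote endpoint.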

Deploy Grafana Alloy

Grafana Alloy is the recommended, modern telemetry collector for sending metrics, logs, and traces to Grafana Cloud. Alloy supports Prometheus-style metrics collection, log collection, and a rich set of integrations, while keeping both its resource footprint and its configuration lightweight.

To learn more about installing and configuring Alloy, see the Grafana Alloy documentation. For collecting logs with Alloy, refer to Collect logs with Alloy.

To roll out Grafana Alloy inside a Kubernetes cluster, use Kubernetes Monitoring. Kubernetes Monitoring provides preconfigured dashboards and alerts so that you can start monitoring quickly.
