Inside the migration from Consul to memberlist at Grafana Labs

At Grafana Labs we run a lot of distributed databases. These distributed databases all make use of a hash ring in order to evenly distribute workloads across replicas of certain components. For a more detailed description of the architecture of our projects, check out our Mimir architecture docs.

This post outlines the process our database teams used to live-migrate from Consul to embedded memberlist. The same process could be used to migrate from any key-value (KV) store to another.

What is the hash ring?

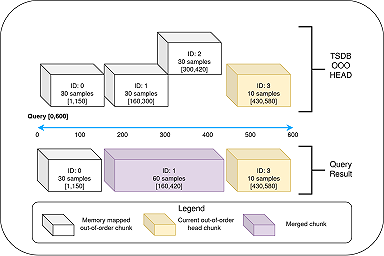

The relevant component that we’ll discuss in this blog post is the hash ring. It’s important to understand that the ring itself isn’t a deployed process, but rather Mimir/Loki services “join” the ring to be given a subset of that ring’s workload (we refer to that subset of the ring as a group of tokens). Scaling up or down a component’s replicas results in recalculating the token sizes and reshuffling of the workload across the new number of replicas.
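To make the idea of tokens concrete, here is a minimal sketch of a token-based hash ring. It is an illustration under stated assumptions, not the ring implementation used by Mimir or Loki: the `Ring` class, the `token_for` helper, the SHA-256 hash, and the 64 tokens per replica are all hypothetical choices.

```python
import bisect
import hashlib


def token_for(value: str) -> int:
    """Map a string to a position on a 32-bit ring (hash choice is an assumption)."""
    return int.from_bytes(hashlib.sha256(value.encode()).digest()[:4], "big")


class Ring:
    """Toy hash ring: each replica that joins registers a group of tokens."""

    def __init__(self, tokens_per_replica: int = 64):
        self.tokens_per_replica = tokens_per_replica
        self._owners = {}   # token position -> replica name
        self._tokens = []   # sorted token positions

    def join(self, replica: str) -> None:
        for i in range(self.tokens_per_replica):
            self._owners[token_for(f"{replica}-{i}")] = replica
        self._tokens = sorted(self._owners)

    def leave(self, replica: str) -> None:
        self._owners = {t: r for t, r in self._owners.items() if r != replica}
        self._tokens = sorted(self._owners)

    def owner(self, key: str) -> str:
        if not self._tokens:
            raise ValueError("ring has no members")
        # The owner is the replica holding the first token clockwise from the
        # key's position, wrapping around at the end of the ring.
        idx = bisect.bisect_right(self._tokens, token_for(key)) % len(self._tokens)
        return self._owners[self._tokens[idx]]


ring = Ring()
for name in ("ingester-0", "ingester-1", "ingester-2"):
    ring.join(name)
print(ring.owner("series-42"))
```

In this sketch, adding a replica only takes work away from existing replicas and hands it to the new one. Keys never move between the replicas that were already in the ring.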

These rings are backed by key-value stores. In our case, we were using Consul, whereas in the past we had used etcd. However, we were only running a single instance of Consul for reasons outlined in the blog post How we’re (ab)using Hashicorp’s Consul at Grafana Labs.

Replicas of our services periodically use heartbeat messages to maintain their healthy status within the ring. Ring entries, which include all the information known to a particular replica about the members of the ring, are also sent via gossip regularly. There’s a tolerance of multiple missed heartbeats before labeling a replica’s entry in the ring as unhealthy. For example, the backing KV store could be down for a period of time before the liveness of the Mimir/Loki cluster is impacted.
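The heartbeat tolerance described above can be sketched as a simple timeout check. This is a simplified model, not the actual ring code: the `RingEntry` type, the `is_healthy` function, and the 15-second period and 60-second timeout are illustrative assumptions.

```python
from dataclasses import dataclass


@dataclass
class RingEntry:
    replica: str
    last_heartbeat: float  # seconds since some epoch, e.g. time.time()


# Illustrative values only (assumptions, not documented defaults).
HEARTBEAT_PERIOD = 15.0   # how often a replica refreshes its entry
HEARTBEAT_TIMEOUT = 60.0  # how stale an entry may be before it is unhealthy


def is_healthy(entry: RingEntry, now: float,
               timeout: float = HEARTBEAT_TIMEOUT) -> bool:
    """An entry stays healthy until its last heartbeat is older than the timeout."""
    return now - entry.last_heartbeat <= timeout


entry = RingEntry("ingester-0", last_heartbeat=100.0)
# Three missed heartbeats (45s of silence) still fall within the timeout.
print(is_healthy(entry, now=100.0 + 3 * HEARTBEAT_PERIOD))
```

Because the timeout spans several heartbeat periods in this sketch, a short outage of the backing KV store does not immediately mark replicas as unhealthy.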

Even after various config tweaks to decrease our “abuse” of Consul, we were still running into the limits of what it could handle under the load we needed it to serve. This was partially due to our use of compare-and-swap (CAS) and the number of extra read requests it results in. (The blog post How we’re using gossip to improve Cortex and Loki availability goes into more detail.)
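To illustrate why CAS generates extra reads, here is a minimal sketch of a read-modify-write loop against a versioned in-memory store. `VersionedKV` and `update_ring` are hypothetical stand-ins, not Consul's API, and the `before_write` hook exists only to simulate a concurrent writer.

```python
class VersionedKV:
    """In-memory stand-in for a KV store that supports compare-and-swap."""

    def __init__(self):
        self._data = {}  # key -> (value, index)
        self.reads = 0   # number of read requests served

    def get(self, key):
        self.reads += 1
        return self._data.get(key, (None, 0))

    def cas(self, key, value, expected_index) -> bool:
        _, current = self._data.get(key, (None, 0))
        if current != expected_index:
            return False  # someone else wrote since we read
        self._data[key] = (value, current + 1)
        return True


def update_ring(kv, key, mutate, max_retries=10, before_write=None):
    """Read, modify, and CAS-write the ring; re-read and retry on conflict."""
    for attempt in range(max_retries):
        value, index = kv.get(key)          # every attempt costs one read
        new_value = mutate(dict(value or {}))
        if before_write is not None:
            before_write(attempt)           # test hook: simulate another writer
        if kv.cas(key, new_value, index):
            return attempt + 1              # number of attempts it took
    raise RuntimeError("too many CAS conflicts")


kv = VersionedKV()
attempts = update_ring(kv, "ring", lambda ring: {**ring, "ingester-0": "ACTIVE"})
print(attempts, kv.reads)
```

Every conflicting write forces the loser to read the whole ring again before retrying, so read traffic grows with the number of replicas heartbeating into the same key.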

And so, we come to memberlist, the library used in Consul that implements a gossip protocol. To remove Consul as a single point of failure, we extended our ring library to use memberlist gossip as the backing key-value store. We mitigate the inherently “eventually consistent” nature of a gossip protocol by setting configuration defaults tuned to our use case and by using a short gossip interval. In addition, we rarely scale a critical component’s replicas up or down often enough, or by large enough amounts, to cause additional lag in ring consistency across the cluster.
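A gossip-backed store stays eventually consistent because each replica merges the ring state it receives into its own copy. The sketch below assumes a last-writer-wins rule keyed on a per-entry timestamp. The `merge` function and the entry layout are hypothetical and much simpler than what a real ring library does.

```python
def merge(local: dict, incoming: dict) -> dict:
    """Merge incoming ring entries into local (in place).

    Returns only the entries that changed, which is what a replica
    would pass on in its next round of gossip.
    """
    changed = {}
    for replica, entry in incoming.items():
        current = local.get(replica)
        if current is None or entry["timestamp"] > current["timestamp"]:
            local[replica] = entry
            changed[replica] = entry
    return changed


# Two replicas hold different, partially stale views of the ring.
view_a = {"ingester-0": {"timestamp": 10, "state": "ACTIVE"}}
view_b = {
    "ingester-0": {"timestamp": 5, "state": "JOINING"},
    "ingester-1": {"timestamp": 7, "state": "ACTIVE"},
}

merge(view_a, view_b)  # a learns about ingester-1
merge(view_b, view_a)  # b learns that ingester-0 is now ACTIVE
print(view_a == view_b)
```

Since the merge only ever keeps the newer entry, the order in which messages arrive does not matter, and a shorter gossip interval simply shortens the time until all views agree.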

Inside the memberlist migration

Next we’ll outline how to migrate from Consul (or another separately deployed KV store) to memberlist. First, here is the configuration used during the migration for Grafana Mimir and Grafana Loki.

The steps are as follows. (For the jsonnet-specific configuration, see our Grafana Mimir migration documentation.)

1. Enable the multi-KV store client for all components that use a ring. Set your existing KV store as the primary store and memberlist as the secondary store, and enable memberlist as well. We add a Kubernetes label to all deployments/statefulsets that use the ring, and use this label to create a Kubernetes service that is used for the join members memberlist configuration. This step results in a rollout of all components that use the ring.

2. Enable mirroring of ring write traffic to the secondary store. This ensures that the memberlist KV store will contain all the current ring information when we swap to using only memberlist. This step changes only runtime reloadable config, so it does not require a rollout.

3. Swap the primary and secondary stores for the multi-KV client, since memberlist now contains all the necessary ring information. The multi-KV client is written so that, if you have a primary and a secondary store configured and then change the primary store configuration, it swaps the two stores. Again, this step changes only runtime reloadable config, so it does not require a rollout.

4. Begin to tear down the migration. We turn off the migration config flag as well as the mirror writes config flag, and set a new flag, migration teardown, to true. This last flag allows currently running processes to keep their config rather than reloading the config that would now tell them to run only memberlist. As processes roll out during this stage, they pick up the new config and use only memberlist rather than the multi-KV client.

5. Clean up. We can remove all the previous runtime config flags from the runtime reloadable config section:

multikv_migration_enabled, multikv_mirror_enabled, multikv_primary, multikv_secondary, multikv_switch_primary_secondary, multikv_migration_teardown
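The mirroring and swap steps above can be sketched with a toy multi-KV client. This is a simplified model under stated assumptions, not the real client: `InMemoryKV`, `MultiKV`, and its `reload` method are hypothetical names, and `reload` stands in for picking up runtime reloadable config without a rollout.

```python
class InMemoryKV:
    """Stand-in for a real store such as Consul or memberlist."""

    def __init__(self):
        self.data = {}

    def get(self, key):
        return self.data.get(key)

    def put(self, key, value):
        self.data[key] = value


class MultiKV:
    """Toy multi-KV client: reads from the primary, optionally mirrors writes."""

    def __init__(self, stores: dict, primary: str):
        self.stores = stores
        self.primary = primary
        self.mirror_enabled = False

    def reload(self, primary=None, mirror_enabled=None):
        """Apply runtime config changes without restarting the process."""
        if primary is not None:
            if primary not in self.stores:
                raise KeyError(primary)
            self.primary = primary
        if mirror_enabled is not None:
            self.mirror_enabled = mirror_enabled

    def get(self, key):
        return self.stores[self.primary].get(key)

    def put(self, key, value):
        self.stores[self.primary].put(key, value)
        if self.mirror_enabled:
            for name, store in self.stores.items():
                if name != self.primary:
                    store.put(key, value)


consul, memberlist = InMemoryKV(), InMemoryKV()
kv = MultiKV({"consul": consul, "memberlist": memberlist}, primary="consul")

kv.put("ring", {"ingester-0": "ACTIVE"})   # multi-KV enabled: Consul only
kv.reload(mirror_enabled=True)             # mirroring on
kv.put("ring", {"ingester-0": "ACTIVE", "ingester-1": "ACTIVE"})
kv.reload(primary="memberlist")            # primary and secondary swapped
print(kv.get("ring"))
```

In this model the secondary only catches up on the first write after mirroring is enabled, which is why the swap comes after mirroring has been running long enough for the ring to be rewritten.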

Why memberlist labels matter

We would be doing our community a disservice if we didn’t mention the importance of using labels when using embedded memberlist.

In Grafana Cloud, for the sake of simplicity (and in order to run as few open ports as we can), Mimir/Tempo/Loki all run memberlist using the same service name, Kubernetes label, and port. Mostly.

Because of the way Kubernetes works, it can reassign the same IP address to pods in different namespaces when pods stop and start. When the same Kubernetes service name and port are used, this can result in a pod in a Loki namespace communicating with a pod in the Mimir namespace whose IP previously belonged to a Loki pod within Loki’s ring. This problem can be mitigated by using labels in memberlist, which ensures that cross-namespace memberlist communication is rejected by the processes running memberlist themselves.

Once you add labels to memberlist gossip messages, each component in a namespace’s ring will not accept messages that don’t carry the required label. We set this label config value to `<k8s cluster>.<k8s namespace>` to ensure it is unique across clusters and namespaces.
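As a rough sketch of the effect, the model below shows a Mimir pod that has inherited a Loki pod's old IP address and receives Loki ring gossip. The `Member` class, the `cluster_label` helper, and the cluster and namespace names are hypothetical. The real check happens inside the memberlist library, not in application code like this.

```python
from dataclasses import dataclass, field


def cluster_label(k8s_cluster: str, k8s_namespace: str) -> str:
    """Build a label that is unique per cluster and namespace."""
    return f"{k8s_cluster}.{k8s_namespace}"


@dataclass
class Member:
    name: str
    label: str
    ring: dict = field(default_factory=dict)

    def receive(self, sender_label: str, ring_update: dict) -> bool:
        """Apply a gossiped ring update only if the sender's label matches ours."""
        if sender_label != self.label:
            return False  # cross-namespace gossip is dropped
        self.ring.update(ring_update)
        return True


loki_label = cluster_label("prod-cluster", "loki")
mimir_label = cluster_label("prod-cluster", "mimir")

# A Mimir pod now owns an IP address that Loki pods still gossip to.
mimir_pod = Member("mimir-ingester-0", mimir_label, {"mimir-ingester-0": "ACTIVE"})
accepted = mimir_pod.receive(loki_label, {"loki-ingester-3": "ACTIVE"})
print(accepted, mimir_pod.ring)
```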

If you are migrating to memberlist from an existing KV store, you can simply add the configuration to set your memberlist label in step 1 of the earlier migration steps. However, if you are already running memberlist and plan to deploy another Loki instance (or any other database) in the same IP address space, you should migrate your existing deployment to use labels.

Migration steps for using labels:

Note: Every step requires a rollout of all running replicas that are using memberlist. You should wait for each rollout to complete before continuing to the next step.

- Add the cluster label config value, but leave it as an empty string (""), and set the cluster label verification disabled flag to true.

- Set the memberlist cluster label to something unique to that environment.

- Set the cluster label verification disabled flag to false.
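The reason for three separate rollouts is that old and new replicas run side by side during each one, so every pairing must keep accepting the other's gossip. The sketch below checks that under a deliberately simplified model: a replica with verification disabled accepts everything, and otherwise requires an exact label match. That acceptance rule, the `LabelConfig` type, and the label value are assumptions made for illustration, and the library's actual wire handling may differ.

```python
from dataclasses import dataclass
from itertools import product


@dataclass(frozen=True)
class LabelConfig:
    cluster_label: str
    verification_disabled: bool


def accepts(receiver: LabelConfig, sender: LabelConfig) -> bool:
    """Simplified rule: skip the check if disabled, else require equal labels."""
    if receiver.verification_disabled:
        return True
    return receiver.cluster_label == sender.cluster_label


def rollout_is_safe(old: LabelConfig, new: LabelConfig) -> bool:
    """Mid-rollout, old and new replicas coexist; all pairings must communicate."""
    return all(accepts(r, s) for r, s in product((old, new), repeat=2))


LABEL = "prod-cluster.loki"  # hypothetical label value

BEFORE = LabelConfig("", verification_disabled=False)
STEP_1 = LabelConfig("", verification_disabled=True)
STEP_2 = LabelConfig(LABEL, verification_disabled=True)
STEP_3 = LabelConfig(LABEL, verification_disabled=False)

for old, new in ((BEFORE, STEP_1), (STEP_1, STEP_2), (STEP_2, STEP_3)):
    print(rollout_is_safe(old, new))

# Jumping straight to a verified label would split the cluster mid-rollout.
print(rollout_is_safe(BEFORE, STEP_3))
```

Under this model, each of the three steps is safe on its own, while skipping directly from an unlabeled cluster to a verified label is not, because unlabeled and labeled replicas would reject each other while the rollout is in progress.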

Solving an incident with memberlist labels

At Grafana Labs, we use common configuration code in many places, especially among our three database teams (Mimir, Loki, and Tempo). This was also the case as our teams were migrating to memberlist from Consul. We also happen to run multiple deployments of the same database, or of each database, within the same Kubernetes cluster (separated by namespace). The common configuration code contained a default port to use for memberlist communication, and we did not foresee a necessity to use a different port value for each namespace or database. Unfortunately, this led to a production incident.

In managed Kubernetes clusters, it’s possible for pods that start and stop in quick succession to reuse IP addresses across namespaces. In both our internal operations environment and one production Kubernetes cluster, this reuse of IP addresses led to pods in namespace A joining the ring in namespace B, because the pods in namespace B’s ring were still gossiping their ring contents to the reused IP address.

Luckily, with Grafana Cloud Metrics, Logs, and Traces, we don’t reuse internal tenant IDs across products. Data that was successfully written during the incident was stored and could later be migrated to the correct blob storage buckets. Unfortunately, because we apply rate limits to internal tenant IDs, some data would have been lost during the incident as rate limits were applied to high-throughput users.

The long-term resolution of this issue was more complicated. In theory, any production deployment, addition of new pods, OOM crashing of pods, etc., could have resulted in IP reuse and the potential for another conjoined ring. To fix the problem, we halted production deploys for a few days and investigated potential fixes. One idea was to use a different port in each namespace. One problem with this was that, at the time, the underlying memberlist library and client did not support running memberlist on multiple ports within the same process. Another was that we would need to write additional checks into our configuration repositories’ CI to enforce that a different port is configured for each cluster/namespace combination.

The fix we chose to move forward with was migrating every memberlist cluster to make use of memberlist labels, as described in the previous section. This meant that while there was still a slim chance of memberlist communication between namespaces due to IP reuse, pods in different namespaces would reject all memberlist communication from each other.

Conclusion

As mentioned in the blog post How we’re using gossip to improve Cortex and Loki availability, there are a few benefits to moving away from a dedicated KV store to embedded memberlist.

First, we’ve decoupled the updating of the ring from the network messages that communicate the ring state. This means that there is no network call required for each update, reducing network traffic. Each individual message is also smaller than before.

Second, we’ve been able to remove the KV store deployment as a single point of failure in our deployment model. In various production environments we were reaching the limit of what our KV store deployments could handle, even with the various workarounds we implemented. (This is mentioned in the blog post How we’re (ab)using Hashicorp’s Consul at Grafana Labs.)

The migration to memberlist will allow us to continue scaling production environments further and to introduce additional deployment model improvements that scale components using the ring more efficiently and safely.

I want to extend a big thank you to the Grafana Mimir team, who implemented our internal memberlist libraries, put together the initial migration steps that the Loki team based our own migrations on, and reviewed this blog post.