How Istio, Tempo, and Loki speed up debugging for microservices

Antonio Berben is a Field Engineer working for solo.io on Gloo Mesh (with Istio) and Gloo Edge (with Envoy). He is a Kubernetes enthusiast and his favorite quote is: “I will go anywhere, provided it be forward” from David Livingstone. You can find and follow Antonio on Twitter.

“How am I supposed to debug this?”

Just imagine: Late Friday, you are about to shut down your laptop and … an issue comes up. Warnings, alerts, red colors. Everything that we, developers, hate the most.

The architect decided to build the system on microservices. Hundreds of them! You, as a developer, think: Why? Why does the architect hate me so much? And then, the main question of the moment: How am I supposed to debug this?

Of course, we all understand the benefits of a microservice architecture. But we also hate the downsides. One of those is the process of debugging or running a postmortem analysis across hundreds of services. It is tedious and frustrating.

Here is an example:

-Where do I start? You think, and you select the obvious candidate to start the analysis with: app1. And you read the logs, narrowed down by time period:

kubectl logs -l app=app1 --since=3h […]

-Nothing. Everything looks normal. You think, maybe the problem came from this other related service. And again:

kubectl logs -l app=app2 --since=3h […]

-Aha! I see something weird here. This app2 has sent a request to this other application, app3. Let’s see. And yet again:

kubectl logs -l app=app3 --since=3h […]

Debugging takes ages. There is a lot of frustration until, finally, one manages to figure things out.

As you can see, the process is slow and inefficient. Some time ago, there was no alternative: logging and traceability were not in place.

Today, the story can be different. With Grafana Loki, Grafana Tempo, and other tools, we can debug things almost instantly.

Traceability: the feature you need

To debug quickly, you need to mark each request with a unique ID, called the trace ID. Every element involved in handling the request then adds its own unique ID, called a span ID.

At the end of the road, you are able to filter down to the exact set of spans belonging to the request that produced the issue.

And not only that. You can also draw all this in a visualization to easily understand the pieces which compose your system.
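As a rough illustration of what these IDs look like, here is a minimal sketch that generates a trace ID and a span ID in the B3 style used later in this post. The helper names are made up for this example, and it assumes 128-bit trace IDs (B3 also allows 64-bit ones); in the setup below, the Istio proxies generate these values for you.

```shell
#!/bin/sh
# Hypothetical helpers, for illustration only.

# Print N random bytes as a lowercase hex string (2*N characters).
random_hex() {
  od -An -tx1 -N "$1" /dev/urandom | tr -d ' \n'
}

# Assumption: 128-bit trace ID (32 hex chars) and 64-bit span ID (16 hex chars).
new_trace_id() { random_hex 16; }
new_span_id()  { random_hex 8; }

trace_id=$(new_trace_id)   # shared by every hop of one request
span_id=$(new_span_id)     # unique to one hop

echo "x-b3-traceid: $trace_id"
echo "x-b3-spanid:  $span_id"
```

Every service that handles the request keeps the same trace ID and creates a new span ID, which is what makes it possible to group the hops afterwards.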

How to do this with the Grafana Stack

You are going to simulate a microservices system running on a service mesh: Istio.

You will say: Istio offers observability through Kiali.

True, Istio is commonly paired with Kiali for observability. However, in the world of microservices, it is worth showing that there are alternatives that fit the requirements at least as well as the default ones.

Grafana Tempo and Grafana Loki are good examples of well-built tracing and logging backends. Regardless of performance comparisons, the following points are worth considering:

- Tempo and Loki both integrate with S3 buckets to store the data. This relieves you from maintaining and indexing storage that, depending on your requirements, might not be needed.

- Tempo and Loki are part of Grafana. Therefore, they integrate seamlessly with Grafana dashboards. (Let’s be honest here: We all love and use Grafana dashboards.)

Now, let’s see how you can speed up the debugging process with Istio and the Grafana Stack.

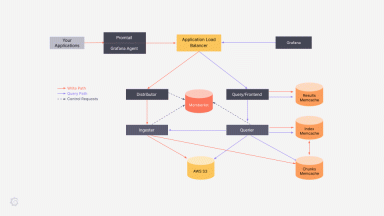

This will be your architecture:

Hands on!

Prerequisites

Prepare Istio

You need to have Istio up and running.

Let’s install the Istio operator:

istioctl operator init

Now, let’s instantiate the service mesh. Istio proxies include a trace ID in the x-b3-traceid header. Notice that you will set the access log format to include that trace ID as part of the log message:

kubectl apply -f - << 'EOF'
apiVersion: install.istio.io/v1alpha1
kind: IstioOperator
metadata:
  name: istio-operator
  namespace: istio-system
spec:
  profile: default
  meshConfig:
    accessLogFile: /dev/stdout
    accessLogFormat: |
      [%START_TIME%] "%REQ(:METHOD)% %REQ(X-ENVOY-ORIGINAL-PATH?:PATH)% %PROTOCOL%" %RESPONSE_CODE% %RESPONSE_FLAGS% %RESPONSE_CODE_DETAILS% %CONNECTION_TERMINATION_DETAILS% "%UPSTREAM_TRANSPORT_FAILURE_REASON%" %BYTES_RECEIVED% %BYTES_SENT% %DURATION% %RESP(X-ENVOY-UPSTREAM-SERVICE-TIME)% "%REQ(X-FORWARDED-FOR)%" "%REQ(USER-AGENT)%" "%REQ(X-REQUEST-ID)%" "%REQ(:AUTHORITY)%" "%UPSTREAM_HOST%" %UPSTREAM_CLUSTER% %UPSTREAM_LOCAL_ADDRESS% %DOWNSTREAM_LOCAL_ADDRESS% %DOWNSTREAM_REMOTE_ADDRESS% %REQUESTED_SERVER_NAME% %ROUTE_NAME% traceID=%REQ(x-b3-traceid)%
    enableTracing: true
    defaultConfig:
      tracing:
        sampling: 100
        max_path_tag_length: 99999
        zipkin:
          address: otel-collector.tracing.svc:9411
EOF

Install the demo application

Let’s create the namespace and label it to auto-inject the Istio proxy:

kubectl create ns bookinfo
kubectl label namespace bookinfo istio-injection=enabled --overwrite

And now the demo application bookinfo:

kubectl apply -n bookinfo -f https://raw.githubusercontent.com/istio/istio/release-1.10/samples/bookinfo/platform/kube/bookinfo.yaml

To access the application through Istio, you need to configure it. It requires a Gateway and a VirtualService:

kubectl apply -f - << 'EOF'
apiVersion: networking.istio.io/v1alpha3
kind: Gateway
metadata:
  name: bookinfo-gateway
  namespace: bookinfo
spec:
  selector:
    istio: ingressgateway # use istio default controller
  servers:
  - port:
      number: 80
      name: http
      protocol: HTTP
    hosts:
    - "*" # Mind the hosts. This matches all
---
apiVersion: networking.istio.io/v1alpha3
kind: VirtualService
metadata:
  name: bookinfo
  namespace: bookinfo
spec:
  hosts:
  - "*"
  gateways:
  - tracing/bookinfo-gateway
  - bookinfo-gateway
  http:
  - match:
    - uri:
        prefix: "/"
    route:
    - destination:
        host: productpage.bookinfo.svc.cluster.local
        port:
          number: 9080
EOF

To access the application, let’s open a tunnel to the Istio ingress gateway (the entry point to the mesh):

kubectl port-forward svc/istio-ingressgateway -n istio-system 8080:80

Now, you can access the application through the browser: http://localhost:8080/productpage
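Before moving on, it can help to confirm from a terminal that the application really answers through the tunnel. The following is a minimal sketch, not part of the original setup: wait_for_http is a hypothetical helper that polls a URL with curl until it returns HTTP 200, and the default attempt count and delay are arbitrary choices.

```shell
#!/bin/sh
# Hypothetical helper: poll a URL until it answers with HTTP 200
# or the attempts run out.
# Usage: wait_for_http URL [ATTEMPTS] [DELAY_SECONDS]
wait_for_http() {
  url=$1
  attempts=${2:-10}
  delay=${3:-2}
  i=1
  while [ "$i" -le "$attempts" ]; do
    # -s: silent, -o /dev/null: discard the body, -w: print only the status code
    code=$(curl -s -o /dev/null -w '%{http_code}' "$url")
    if [ "$code" = "200" ]; then
      echo "ready after $i attempt(s)"
      return 0
    fi
    i=$((i + 1))
    sleep "$delay"
  done
  echo "not ready: last status $code" >&2
  return 1
}

# Example (requires the port-forward above to be running):
# wait_for_http http://localhost:8080/productpage
```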

Install Grafana Stack

Now, let’s create the Grafana components. Let’s start with Tempo, the tracing backend we have mentioned before:

kubectl create ns tracing
helm repo add grafana https://grafana.github.io/helm-charts
helm repo update
helm install tempo grafana/tempo --version 0.7.4 -n tracing -f - << 'EOF'
tempo:
  extraArgs:
    "distributor.log-received-traces": true
  receivers:
    zipkin:
    otlp:
      protocols:
        http:
        grpc:
EOF

Next component? Let’s create a simple deployment of Loki:

helm install loki grafana/loki-stack --version 2.4.1 -n tracing -f - << 'EOF'
fluent-bit:
  enabled: false
promtail:
  enabled: false
prometheus:
  enabled: true
  alertmanager:
    persistentVolume:
      enabled: false
  server:
    persistentVolume:
      enabled: false
EOF

Now, let’s deploy the OpenTelemetry Collector. You use this component to distribute the traces across your infrastructure:

kubectl apply -n tracing -f https://raw.githubusercontent.com/antonioberben/examples/master/opentelemetry-collector/otel.yaml
kubectl apply -n tracing -f - << 'EOF'
apiVersion: v1
kind: ConfigMap
metadata:
  name: otel-collector-conf
  labels:
    app: opentelemetry
    component: otel-collector-conf
data:
  otel-collector-config: |
    receivers:
      zipkin:
        endpoint: 0.0.0.0:9411
    exporters:
      otlp:
        endpoint: tempo.tracing.svc.cluster.local:55680
        insecure: true
    service:
      pipelines:
        traces:
          receivers: [zipkin]
          exporters: [otlp]
EOF

The next component is Fluent Bit. You will use it to scrape the logs from your cluster:

(Note: The configuration tails only the container logs that match the pattern /var/log/containers/*istio-proxy*.log)
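To get a feel for which files that pattern selects, here is a small sketch that applies the same glob with the shell's case matching. The file names are made up; they follow the usual <pod>_<namespace>_<container>-<id>.log layout of /var/log/containers. Shell globbing is only an approximation of Fluent Bit's own path matching, so treat this as an illustration rather than a specification.

```shell
#!/bin/sh
# Hypothetical helper: return 0 if a path matches the tail input's Path pattern.
matches_istio_proxy() {
  case "$1" in
    /var/log/containers/*istio-proxy*.log) return 0 ;;
    *) return 1 ;;
  esac
}

# Made-up file names: one sidecar log and one application container log.
for f in \
  /var/log/containers/productpage-v1-abc_bookinfo_istio-proxy-0123.log \
  /var/log/containers/productpage-v1-abc_bookinfo_productpage-4567.log
do
  if matches_istio_proxy "$f"; then
    echo "tailed:  $f"
  else
    echo "skipped: $f"
  fi
done
```

Only the sidecar's log is picked up, which is where the access log lines carrying the trace ID are written.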

helm repo add fluent https://fluent.github.io/helm-charts
helm repo update
helm install fluent-bit fluent/fluent-bit --version 0.16.1 -n tracing -f - << 'EOF'
logLevel: trace
config:
  service: |
    [SERVICE]
        Flush 1
        Daemon Off
        Log_Level trace
        Parsers_File custom_parsers.conf
        HTTP_Server On
        HTTP_Listen 0.0.0.0
        HTTP_Port {{ .Values.service.port }}
  inputs: |
    [INPUT]
        Name tail
        Path /var/log/containers/*istio-proxy*.log
        Parser cri
        Tag kube.*
        Mem_Buf_Limit 5MB
  outputs: |
    [OUTPUT]
        name loki
        match *
        host loki.tracing.svc
        port 3100
        tenant_id ""
        labels job=fluentbit
        label_keys $trace_id
        auto_kubernetes_labels on
  customParsers: |
    [PARSER]
        Name cri
        Format regex
        Regex ^(?<time>[^ ]+) (?<stream>stdout|stderr) (?<logtag>[^ ]*) (?<message>.*)$
        Time_Key time
        Time_Format %Y-%m-%dT%H:%M:%S.%L%z
EOF

Now, the Grafana query. This component is already configured to connect to Loki and Tempo:

helm install grafana grafana/grafana -n tracing --version 6.13.5 -f - << 'EOF'
datasources:
  datasources.yaml:
    apiVersion: 1
    datasources:
    - name: Tempo
      type: tempo
      access: browser
      orgId: 1
      uid: tempo
      url: http://tempo.tracing.svc:3100
      isDefault: true
      editable: true
    - name: Loki
      type: loki
      access: browser
      orgId: 1
      uid: loki
      url: http://loki.tracing.svc:3100
      isDefault: false
      editable: true
      jsonData:
        derivedFields:
        - datasourceName: Tempo
          matcherRegex: "traceID=(\\w+)"
          name: TraceID
          url: "$${__value.raw}"
          datasourceUid: tempo
env:
  JAEGER_AGENT_PORT: 6831
adminUser: admin
adminPassword: password
service:
  type: LoadBalancer
EOF

Test it

After the installations are completed, let’s open a tunnel to the Grafana query forwarding the port:

kubectl port-forward svc/grafana -n tracing 8081:80

Access it using the credentials you have configured when you installed it:

- user: admin

- password: password

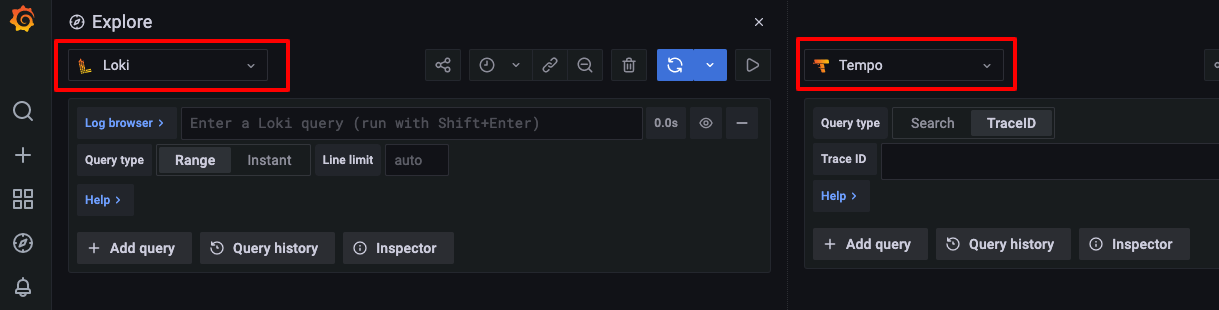

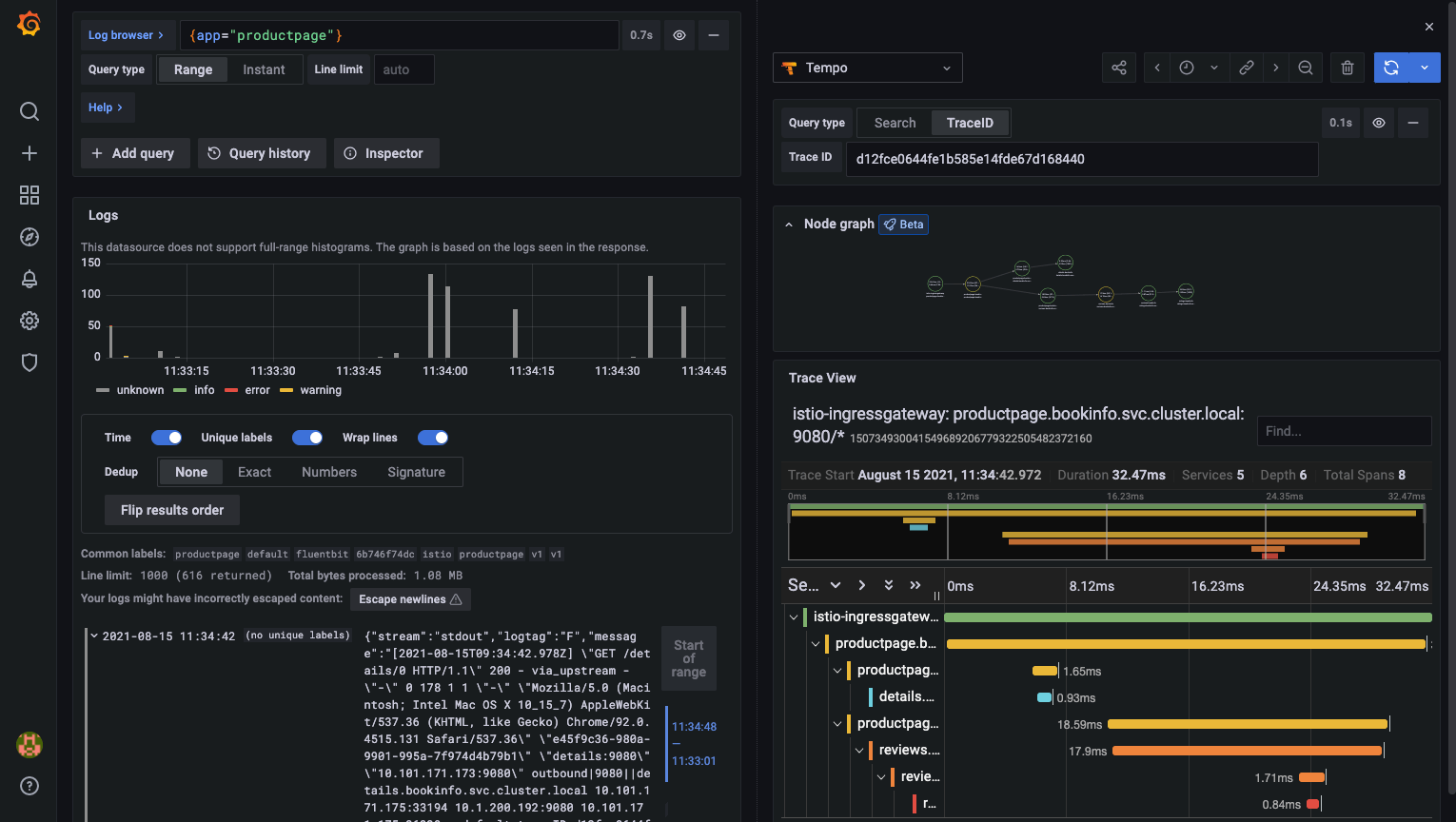

Go to the Explore tab. There, select Loki for one side, then click Split and choose Tempo for the other side:

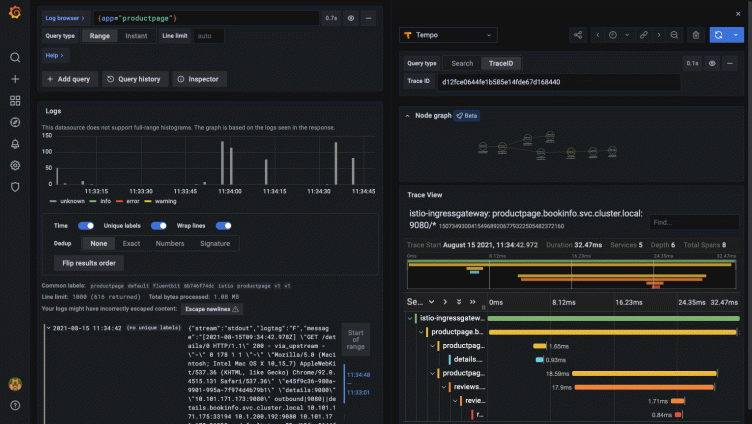

You will see something like this:

Finally, let’s create some traffic through the tunnel we already opened to the bookinfo application:

http://localhost:8080/productpage
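The same traffic can also be generated from a terminal instead of the browser. This is a minimal sketch: generate_traffic is a hypothetical helper, and the default of 20 requests is arbitrary. Because sampling is set to 100 in the mesh configuration above, each request should produce a trace.

```shell
#!/bin/sh
# Hypothetical helper: send COUNT requests to URL and print one HTTP
# status code per line.
# Usage: generate_traffic URL [COUNT]
generate_traffic() {
  url=$1
  count=${2:-20}
  i=0
  while [ "$i" -lt "$count" ]; do
    # -s: silent, -o /dev/null: discard the body, -w: print the status code
    curl -s -o /dev/null -w '%{http_code}\n' "$url"
    i=$((i + 1))
  done
}

# Example (requires the port-forward to the ingress gateway to be running);
# the pipeline summarizes how many responses came back per status code:
# generate_traffic http://localhost:8080/productpage 20 | sort | uniq -c
```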

Refresh (hard refresh to avoid cache) the page several times until you can see traces coming into Loki. Remember to add the filter to ProductPage to see its access log traces:
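If you want to check from a terminal that logs are arriving, the filter can also be written as a LogQL query and sent to Loki's HTTP API. The sketch below makes several assumptions: the helper names are made up, Loki is reachable on localhost:3100 (for example through a port-forward to the loki service, which this post does not set up), and a plain line filter on "productpage" is used instead of whatever labels your deployment attaches. The job label comes from the Fluent Bit output configured earlier.

```shell
#!/bin/sh
# Hypothetical helper: build a LogQL query made of a stream selector on the
# job label plus a line filter.
build_logql() {
  job=$1
  needle=$2
  printf '{job="%s"} |= "%s"' "$job" "$needle"
}

# Hypothetical helper: ask Loki for recent log lines matching the query.
# Assumption: LOKI_URL points at Loki, e.g. after
#   kubectl port-forward svc/loki -n tracing 3100:3100
query_loki() {
  curl -s -G "${LOKI_URL:-http://localhost:3100}/loki/api/v1/query_range" \
    --data-urlencode "query=$(build_logql "$1" "$2")" \
    --data-urlencode "limit=${3:-10}"
}

# Example:
# query_loki fluentbit productpage 5
```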

Click on the log and a Tempo button is shown:

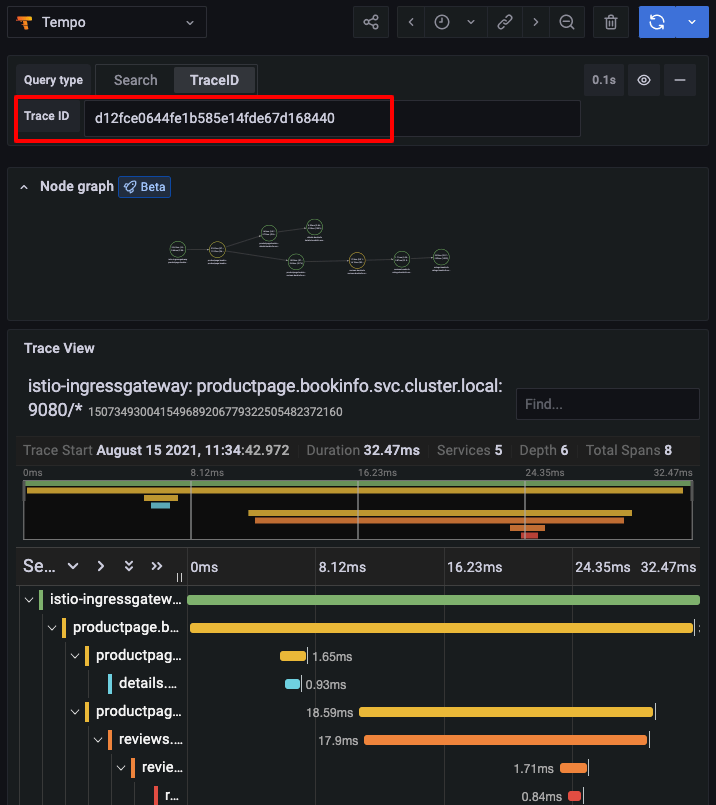

Immediately, the trace ID is passed to the Tempo panel, which displays the trace and its diagram.
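The Tempo button works because of the derived field configured on the Loki data source, which applies the regex traceID=(\w+) to every log line. The following sketch reproduces that extraction in the shell. The helper name and the log line are made up for illustration, and the final curl is an assumption about reaching Tempo's HTTP API through a port-forward that this post does not set up.

```shell
#!/bin/sh
# Hypothetical helper: print the value that follows "traceID=" in a log line.
# Prints nothing if the line carries no trace ID.
extract_trace_id() {
  printf '%s\n' "$1" | sed -n 's/.*traceID=\([[:alnum:]_]\{1,\}\).*/\1/p'
}

# Shortened, made-up access log line.
line='[2021-07-01T10:00:00.000Z] "GET /productpage HTTP/1.1" 200 - traceID=80f198ee56343ba864fe8b2a57d3eff7'

trace_id=$(extract_trace_id "$line")
echo "$trace_id"

# Assumption: with Tempo reachable on localhost:3100, the trace itself could
# then be fetched directly:
# curl -s "http://localhost:3100/api/traces/$trace_id"
```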

Final thoughts

Having a diagram which displays all elements involved in a request through microservices increases the speed to find bugs or to understand what happened in your system when running a postmortem analysis.

By reducing that time, you increase efficiency so that your developers can keep working and delivering on more business requirements.

In my personal opinion, that is the key point: Increase business productivity.

Traceability and, in this case, the Grafana Stack help you accomplish that.
