Introducing Grafana Cloud Agent, a remote_write-focused Prometheus agent that can save 40% on memory usage

Today, we are announcing the Grafana Cloud Agent (Ed. note: now called Grafana Agent), a subset of Prometheus built for hosted metrics that runs lean on memory and uses much of the same battle-tested code that has made Prometheus so awesome.

At Grafana Labs, we love Prometheus. We deploy it for our internal monitoring, use it alongside Alertmanager, and have it configured to send its data to Cortex via remote_write. Unfortunately, as we scale to handle more than 4 million active series in a single Prometheus, our deployment becomes more and more difficult to manage. Most organizations don’t have this many series, but at our scale we increasingly need to vertically scale the node that runs Prometheus to deal with growing memory usage.

Prometheus’s large memory requirements make it a risky single point of failure. If it runs out of memory, we’re not monitoring our infrastructure anymore. A common solution is to start sharding your Prometheus instances, but we prefer operating one Prometheus instance per cluster, and adding sharding properly can be non-trivial. In general, we need to watch Prometheus very carefully to keep our monitoring system running.

This is a common problem for organizations running Prometheus at a similarly massive scale, and it feels like even more of a burden when using a hosted metrics solution such as Cortex. Users of Cortex don’t need local storage and querying; data is already sent to Cortex through remote_write, and there’s a Prometheus-compatible query API. Additionally, if it were easier to shard our cluster-wide Prometheus configuration, we wouldn’t have the scaling problems described above. And so we created the Grafana Cloud Agent, built to simplify running a Prometheus-style infrastructure when using a hosted metrics solution.

What is it?

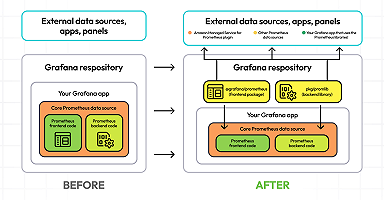

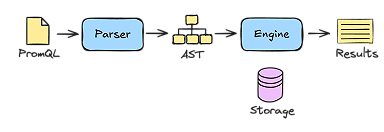

As the title of this post suggests, the Grafana Cloud Agent is a subset of Prometheus without any querying or local storage, using the same service discovery, relabeling, WAL, and remote_write code found in Prometheus. Because it is trimmed down to only the parts needed to interact with Cortex, tests of our first release have seen up to a 40% reduction in memory usage compared to an equivalent Prometheus process.

The Agent can be deployed with or without something we’re calling host filtering. When enabled, host filtering ignores any discovered targets that run on a different machine from the one the Agent is running on. This is particularly useful when deploying the Agent on Kubernetes as a DaemonSet: each Agent will only scrape and send metrics from pods that run on the same node as that Agent. This distributes memory usage across your cluster instead of concentrating it on one node, though it also means that if a node itself starts experiencing problems, the metrics that show those issues won’t be delivered to the remote_write endpoint and alerting system.
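To make the idea concrete, here is a minimal sketch of host filtering in Python. It is not the Agent’s actual implementation: the function name `filter_targets_for_host` is hypothetical, targets are modeled as plain label dictionaries, and we assume the node is identified by the `__meta_kubernetes_pod_node_name` label that Prometheus’s Kubernetes pod discovery attaches. The real Agent’s matching rules may differ.

```python
from typing import Dict, List

# Assumption: the node a pod runs on is exposed through this
# Kubernetes service-discovery meta label.
NODE_LABEL = "__meta_kubernetes_pod_node_name"


def filter_targets_for_host(
    targets: List[Dict[str, str]], hostname: str
) -> List[Dict[str, str]]:
    """Keep only the discovered targets that run on `hostname`.

    Targets without the node label are dropped, since we cannot tell
    which machine they belong to.
    """
    return [t for t in targets if t.get(NODE_LABEL) == hostname]


# Hypothetical discovery result for a three-pod cluster.
discovered = [
    {"__address__": "10.0.1.5:8080", NODE_LABEL: "node-a"},
    {"__address__": "10.0.2.7:8080", NODE_LABEL: "node-b"},
    {"__address__": "10.0.1.9:9090", NODE_LABEL: "node-a"},
]

# An Agent running on node-a would scrape only its two local pods.
local_targets = filter_targets_for_host(discovered, "node-a")
```

With one Agent per node, each Agent holds only its own slice of the target list, which is where the memory distribution comes from.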

Regardless of whether host filtering is enabled, migrating to the Grafana Cloud Agent is relatively painless and requires only a little work. We’ve written a short guide to explain how to configure the Agent from an existing Prometheus config.

Can I use it without Grafana Cloud?

Despite the similarity in name to Grafana Cloud, our hosted platform for metrics and logs, you can use the Grafana Cloud Agent with any platform that supports the Prometheus remote_write API. In the future, we’re planning on bundling optional features that make the Agent work seamlessly with Grafana Cloud, but it will always be possible to use it with any remote_write-compatible service.

Another agent?

Before we ventured off to release yet another agent into the world, we took a hard look at what was available today. While other collection agents could be used to collect Prometheus metrics, they tend to share one problem: they don’t feel like Prometheus, and they lack features we rely on, such as service discovery or relabeling rules. In the end, the alternative solutions out there felt like a jack of all trades, master of none.

We’re taking a different approach. By focusing directly on Prometheus, we can make it feel like a first-class citizen. We want users to face fewer compromises with our Agent than with others, which reduces friction when migrating. In short, while other agents do support scraping Prometheus-style metrics, our Agent feels like Prometheus because it is based on Prometheus.

Trade-offs

To optimize the Grafana Cloud Agent for lower resource requirements, we had to make some trade-offs and remove some capabilities that are standard in Prometheus. Most importantly, the Agent has no support for recording or alerting rules. At first glance, this seems completely contradictory to the primary purpose of Prometheus (which is, of course, to alert). However, we now support recording rules and alerts within Cortex and Grafana Cloud. This helps balance the decision to remove support from within the Agent, though it also ties the reliability of your alerts to the reliability of the hosted solution.

Roadmap

We’re planning on extending the Grafana Cloud Agent to collect all types of data that are supported by Grafana Cloud. Support for both Graphite and Loki will eventually be included in the Agent.

At the same time, we’re planning another deployment mode for the Agent that we’ve been calling the scraping service mode, in which a cluster of Agents will communicate and balance the scraping load among themselves. This will help address some of the trade-offs mentioned earlier in this blog post, such as regaining the ability to capture metrics from problematic nodes when sharding the Agent. Keep an eye out for more information on that in the future.
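This post does not describe how the scraping service mode will divide work, so the following is only an illustration of one generic way a cluster could balance scrape targets: rendezvous (highest-random-weight) hashing. The names `assign_targets` and `_score`, the agent names, and the target addresses are all hypothetical, and the eventual Agent implementation may work quite differently.

```python
import hashlib
from typing import Dict, List


def _score(agent: str, target: str) -> int:
    """Deterministic pseudo-random weight for an (agent, target) pair."""
    digest = hashlib.sha256(f"{agent}|{target}".encode("utf-8")).digest()
    return int.from_bytes(digest[:8], "big")


def assign_targets(agents: List[str], targets: List[str]) -> Dict[str, List[str]]:
    """Assign each target to the agent with the highest score for it.

    Every agent can compute the same assignment independently, as long
    as all agents agree on the cluster membership list.
    """
    if not agents:
        raise ValueError("at least one agent is required")
    assignment: Dict[str, List[str]] = {agent: [] for agent in agents}
    for target in targets:
        owner = max(agents, key=lambda agent: _score(agent, target))
        assignment[owner].append(target)
    return assignment


# Hypothetical cluster of three agents scraping fifty targets.
agents = ["agent-0", "agent-1", "agent-2"]
targets = [f"10.0.0.{i}:9100" for i in range(50)]
before = assign_targets(agents, targets)

# If agent-2 leaves, only its targets move; the others keep theirs.
after = assign_targets(["agent-0", "agent-1"], targets)
```

The useful property in this sketch is that targets are not tied to the node they run on, so a target on a struggling node can still be scraped by a healthy Agent elsewhere in the cluster.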

Give it a try!

Docker images and static binaries are available on the GitHub releases page. We’ve been happy with the benefits the Agent has given us, and we’re excited for others to try it, too. Let us know what you think!

Learn more about Cortex

If you’re interested in finding out more about Cortex, sign up for our webinar scheduled for March 31 at 9:30am PT. There will be a preview of new features in the upcoming Cortex 1.0 release, a demonstration, and Q&A with Cortex maintainers Tom Wilkie and Goutham Veeramachaneni.