How Grafana Labs Is Running Jaeger at Scale with Prometheus and Envoy

At Grafana Labs, we are always working on optimizing query time on our Grafana Cloud hosted metrics platform, which incorporates our Metrictank Graphite-compatible metrics service, and Cortex, the open source project for multitenant, horizontally scalable Prometheus-as-a-Service.

I hack on Cortex and help run it in production. But as we started adding scale – we regularly deal with hundreds of concurrent queries per second – we noticed poor query performance. We found ourselves adding new metrics on each rollout to test our theories, many times shooting in the dark, only to have our assumptions invalidated after a lot of experimentation.

We then decided to turn to Jaeger, a CNCF project for distributed tracing, and things instantly improved. Query performance improved by as much as 10x. This post will take a deep dive into how we deploy and use Jaeger for maximum benefit.

Merging the Pillars!

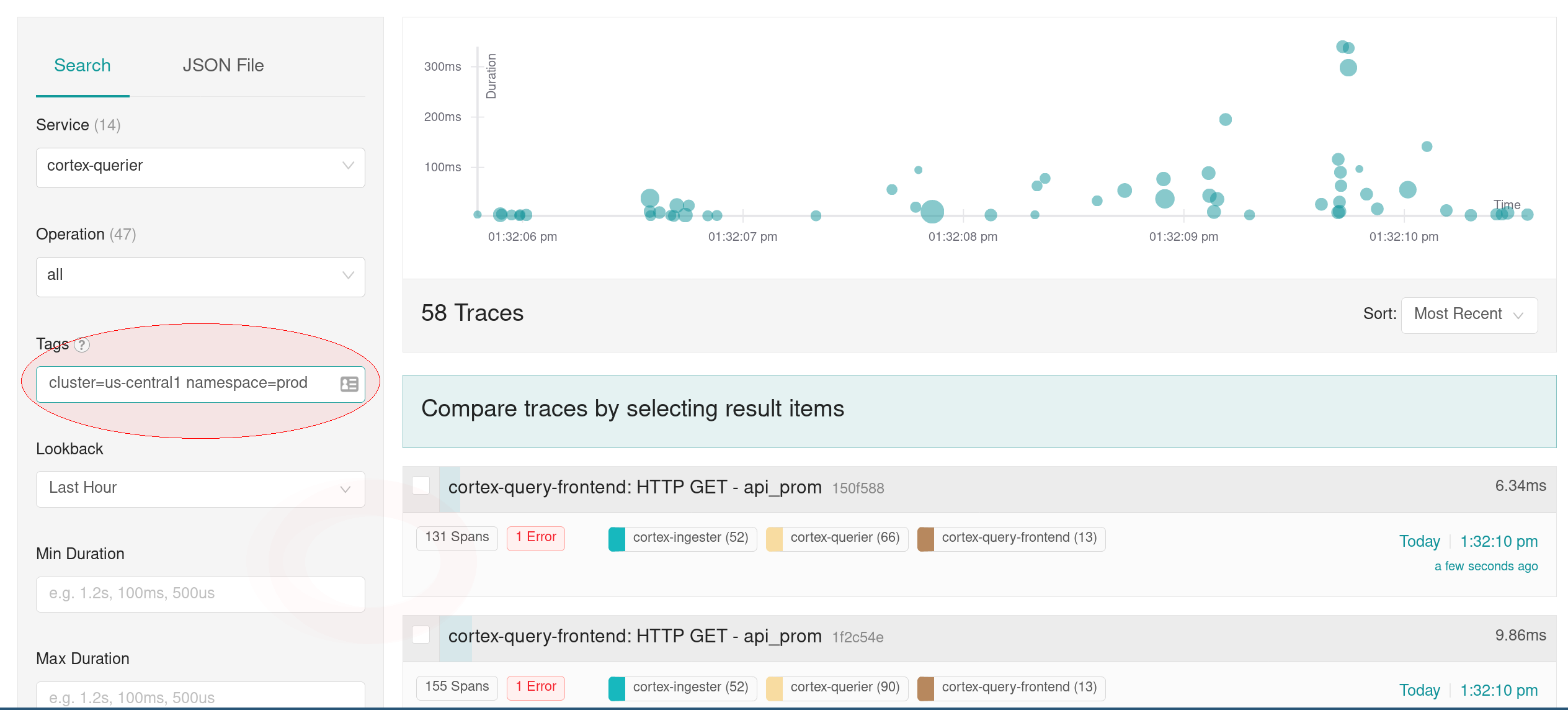

Jaeger tends to dump a lot of data on you, and you don’t necessarily know what you’re looking for. For example, if you have slow queries in only one environment, the Jaeger UI doesn’t actually tell you which particular tags in which particular environment are slow.

We use Prometheus to enable drilling down into specific traces. We have RED dashboards that highlight which services are slow, and when we see a spike, we can tell what environment it’s in. We can then go to the Jaeger UI to select the traces in the particular time range and environment (identified by namespace + cluster for us), and investigate which functions are taking too long, whether we’re making too many calls, whether we could batch calls together, etc. After rolling out the changes, we can verify their impact using both Jaeger and Prometheus.

To enable this, we add the namespace and cluster tags to the env variables. We even published the jsonnet we use to add the tags:

    querier_container+::
      $.jaeger_mixin,

If you’re using the jaeger-operator, you will be able to get the same feature in the next release ;)

This lets us attach the right tags with just a single line, and using the Prometheus labels we get in our alerts, we can drill down to the right traces.
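To make the tagging idea concrete, here is a minimal Python sketch of what such a mixin could end up setting on a container. It assumes the tags are passed through the Jaeger client's `JAEGER_TAGS` environment variable as comma-separated `key=value` pairs; the helper names and the example namespace and cluster values are hypothetical, not taken from our jsonnet.

```python
def jaeger_tags(namespace: str, cluster: str) -> str:
    """Build a comma-separated key=value string for JAEGER_TAGS.

    Assumption: tracer-level tags are supplied via the JAEGER_TAGS
    environment variable read by the Jaeger client library.
    """
    tags = {"namespace": namespace, "cluster": cluster}
    return ",".join(f"{key}={value}" for key, value in tags.items())


def container_env(namespace: str, cluster: str) -> dict:
    """Return the extra environment a container would need (hypothetical helper)."""
    return {"JAEGER_TAGS": jaeger_tags(namespace, cluster)}


if __name__ == "__main__":
    # Example values are made up for illustration.
    print(container_env("cortex-prod", "us-central1"))
```

Because the same namespace and cluster values show up as Prometheus labels, an alert's labels can be pasted straight into the Jaeger UI's tag filter.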

Now, at the scale we were running, our 99th-percentile latencies were great, but specific users were seeing the occasional slow query, and it was hard to find those traces. To track them down, we started printing the trace IDs in the logs and then regularly compiled spreadsheets of the slowest queries. From these sheets, we could jump directly to our slowest queries in Jaeger.
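As a rough illustration of that workflow, the sketch below pulls trace IDs and durations out of log lines and ranks the slowest ones. The log format (`traceID=... duration=...s`) and the base URL are assumptions made up for this example; only the `/trace/<id>` path reflects how the Jaeger UI addresses a single trace.

```python
import re

# Hypothetical log format: "... traceID=<hex> ... duration=<seconds>s"
LINE_RE = re.compile(
    r"traceID=(?P<trace_id>[0-9a-f]+).*?duration=(?P<seconds>[0-9.]+)s"
)


def slowest_queries(lines, top_n=3):
    """Return the top_n (trace_id, seconds) pairs, slowest first."""
    rows = []
    for line in lines:
        match = LINE_RE.search(line)
        if match is None:
            continue  # line carries no trace ID or duration
        rows.append((float(match["seconds"]), match["trace_id"]))
    rows.sort(reverse=True)
    return [(trace_id, seconds) for seconds, trace_id in rows[:top_n]]


def trace_link(base_url, trace_id):
    """Build a link to one trace in the Jaeger UI (base_url is a placeholder)."""
    return f"{base_url.rstrip('/')}/trace/{trace_id}"


if __name__ == "__main__":
    sample = [
        "level=info traceID=aa11 duration=0.20s",
        "level=info traceID=bb22 duration=4.75s",
        "level=info traceID=cc33 duration=1.10s",
    ]
    for trace_id, seconds in slowest_queries(sample, top_n=2):
        print(seconds, trace_link("https://jaeger.example.com", trace_id))
```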

How We Scale Jaeger

We run Jaeger a bit differently from most other organizations. We have a central Jaeger cluster that collects all the spans, and have 2 agents in each of our 7+ clusters sending to the central collectors. When we first started using Jaeger, communication between the Agent and the Collector used TChannel by default, which wasn’t able to load balance the traffic. Each agent held a long-lived connection to a single collector and sent all of its spans there. This meant that if a cluster was sending too many spans, its agents would connect to only a couple of collectors and overwhelm them, resulting in dropped spans. Adding more collectors didn’t help, as the loaded agents never contacted the new collectors.

We were able to resolve those issues by using the new gRPC changes in Jaeger coupled with Envoy, another CNCF project, for load balancing.
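The difference between the two modes can be seen in a toy model. The sketch below is not Envoy or Jaeger code; it only compares agents pinned to a single collector against span batches spread round-robin across all collectors. Agent names, collector names, span counts, and the batch size are all invented for illustration.

```python
from itertools import cycle


def pinned_load(spans_per_agent, agent_to_collector, collectors):
    """Each agent sends everything over one long-lived connection."""
    load = {collector: 0 for collector in collectors}
    for agent, spans in spans_per_agent.items():
        load[agent_to_collector[agent]] += spans
    return load


def balanced_load(spans_per_agent, collectors, batch_size=100):
    """Each batch of spans goes to the next collector in turn."""
    load = {collector: 0 for collector in collectors}
    targets = cycle(collectors)
    for spans in spans_per_agent.values():
        remaining = spans
        while remaining > 0:
            sent = min(batch_size, remaining)
            load[next(targets)] += sent
            remaining -= sent
    return load


if __name__ == "__main__":
    spans = {"agent-a": 9000, "agent-b": 600, "agent-c": 300}
    collectors = ["c0", "c1", "c2"]
    pinning = {"agent-a": "c0", "agent-b": "c0", "agent-c": "c1"}
    print("pinned:  ", pinned_load(spans, pinning, collectors))
    print("balanced:", balanced_load(spans, collectors))
```

In the pinned case, a newly added collector such as `c2` receives nothing, which matches what we saw before a load balancer sat between agents and collectors.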

How We Monitor Jaeger

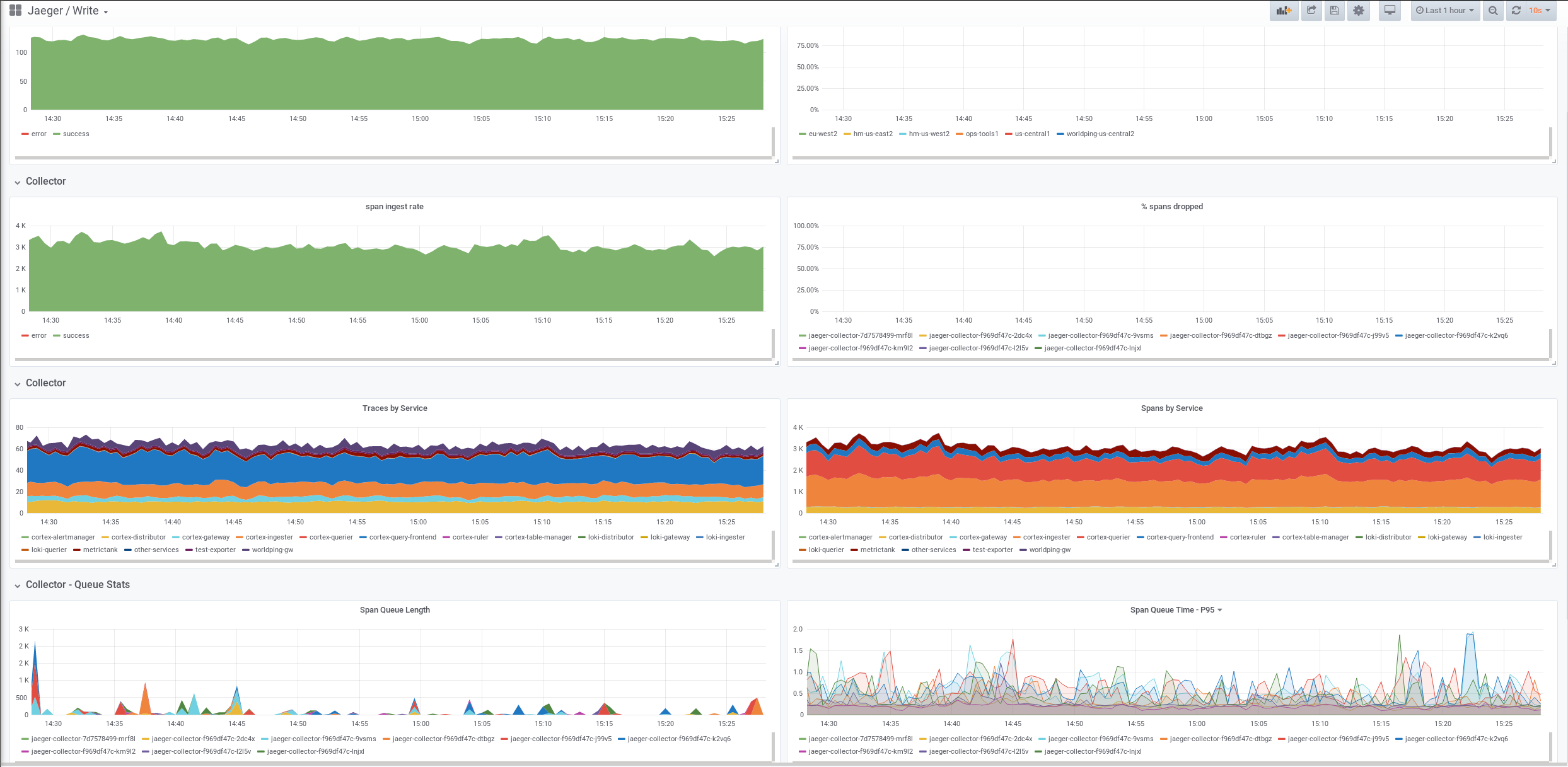

When we set up Jaeger and started running it, we wanted to see if it was working or not. Under heavy load with the original lack of load balancing, we found that we were actually dropping the data (spans). We wanted to know where we were dropping the data, what service was producing how much data, etc., but there were no dashboards or alerts to do this.

So I built a Jaeger mixin, which includes recording rules, dashboards, and alerts, and that gave us a lot of visibility. We’ve since donated the Jaeger mixin to the community.
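The kind of question such rules and alerts answer can be sketched in a few lines of Python. This is only an illustration of the arithmetic, not the mixin itself: the per-service counts stand in for counter increases over some window, and the function names and the 1% threshold are assumptions chosen for the example.

```python
def drop_ratios(received, dropped):
    """Fraction of spans dropped per service over a window.

    `received` and `dropped` map service name -> span count in the window
    (hypothetical inputs; in practice these would come from counters).
    """
    ratios = {}
    for service, total in received.items():
        if total <= 0:
            continue  # no traffic, nothing to compute
        ratios[service] = dropped.get(service, 0) / total
    return ratios


def services_to_alert(received, dropped, threshold=0.01):
    """Services whose drop ratio exceeds the (assumed) threshold."""
    ratios = drop_ratios(received, dropped)
    return sorted(s for s, ratio in ratios.items() if ratio > threshold)


if __name__ == "__main__":
    received = {"querier": 10000, "ingester": 5000, "idle": 0}
    dropped = {"querier": 500, "ingester": 10}
    print(drop_ratios(received, dropped))
    print(services_to_alert(received, dropped))
```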

What’s Next

We have a lot of plans around the tracing ecosystem and are really looking forward to implementing them. One of the more immediate items is exemplars in Prometheus. Right now there is no way to jump right to Jaeger from the dashboards. For example, if you see the 99th percentile jump to 5 seconds during a spike, ideally you just want to click on the spike and jump to the trace of the request that caused the latency spike. We are working on implementing this in Prometheus, where Prometheus will also store the trace IDs associated with a histogram bucket. Once implemented, this will be one of the first open-source implementations of exemplars.
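The data structure behind this idea is small. The sketch below is a simplified stand-in, not the Prometheus implementation: a histogram that remembers, for each bucket, the trace ID of the most recent observation that landed there. The class and method names are hypothetical, and unlike Prometheus buckets the counts here are per bucket rather than cumulative.

```python
import bisect


class ExemplarHistogram:
    """Histogram that keeps one (trace_id, value) exemplar per bucket."""

    def __init__(self, upper_bounds):
        # The final +Inf bucket catches everything above the largest bound.
        self.upper_bounds = sorted(upper_bounds) + [float("inf")]
        self.counts = [0] * len(self.upper_bounds)
        self.exemplars = [None] * len(self.upper_bounds)

    def _bucket(self, seconds):
        # First bucket whose upper bound is >= the observed value.
        return bisect.bisect_left(self.upper_bounds, seconds)

    def observe(self, seconds, trace_id):
        index = self._bucket(seconds)
        self.counts[index] += 1
        self.exemplars[index] = (trace_id, seconds)  # keep the latest one

    def exemplar_for(self, seconds):
        """Exemplar stored in the bucket that `seconds` falls into, if any."""
        return self.exemplars[self._bucket(seconds)]


if __name__ == "__main__":
    histogram = ExemplarHistogram([0.1, 1, 5])
    histogram.observe(0.05, "t1")
    histogram.observe(4.8, "t2")
    # A dashboard click on the 5-second bucket would resolve to trace t2.
    print(histogram.exemplar_for(5.0))
```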

Another thing we are interested in is scalable tail-based sampling and dynamic filters for traces. Initially we traced 100% of requests so that we wouldn’t miss anything interesting, but as our query load increased from the high tens of requests per second to the high hundreds, we started storing only a fourth of our queries. We are very interested in the OpenTelemetry Service, which lets us set the sampling frequency based on the duration of the requests and particular tags (tail-based sampling). We are exploring ways to scale it horizontally in tail-based sampling mode and to contribute that work back to the community.
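The decision that tail-based sampling makes can be sketched as follows. This is not the OpenTelemetry Service's API; it is a minimal illustration, with hypothetical names and thresholds, of deciding after a trace has finished whether to keep it: slow traces and traces with selected tags are always kept, and the rest are kept at a baseline rate using a deterministic hash of the trace ID.

```python
import zlib
from dataclasses import dataclass, field


@dataclass
class Trace:
    trace_id: str
    duration_seconds: float
    tags: dict = field(default_factory=dict)


def keep_trace(trace, slow_threshold=2.0, always_keep_tags=None, baseline_rate=0.25):
    """Decide whether to store a completed trace (all parameters are assumptions)."""
    # Rule 1: always keep slow requests.
    if trace.duration_seconds >= slow_threshold:
        return True
    # Rule 2: always keep traces carrying any of the selected tag values.
    for key, value in (always_keep_tags or {}).items():
        if trace.tags.get(key) == value:
            return True
    # Rule 3: keep a fixed fraction of everything else, chosen by trace ID
    # so the same trace always gets the same decision.
    bucket = zlib.crc32(trace.trace_id.encode("utf-8")) % 10000
    return bucket < baseline_rate * 10000


if __name__ == "__main__":
    slow = Trace("a1", 5.0)
    failed = Trace("b2", 0.1, {"error": "true"})
    print(keep_trace(slow), keep_trace(failed, always_keep_tags={"error": "true"}))
```

Deciding on the full trace is what makes horizontal scaling hard: every span of a trace has to reach the same instance before a rule like the duration check can be evaluated.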